Domain-Constrained RAG Chatbot: Complete Case Study on The Tech Thinker AI Assistant

Artificial intelligence (AI) chatbots have evolved rapidly with the rise of Large Language Models (LLMs), enabling natural conversations, contextual responses, and intelligent automation. Despite these advancements, however, one critical challenge remains: hallucinations.

Hallucinations occur when AI models generate responses that sound confident and convincing but are factually incorrect or not grounded in any real source of truth. This becomes a serious problem in domains like engineering, technical documentation, and knowledge platforms, where accuracy, traceability, and reliability are non-negotiable.

For small and medium-sized websites, the challenge is even more pronounced. Traditional search systems fail to understand user intent, while generic AI models lack domain awareness, often leading to misleading or irrelevant answers.

In this article, we explore how to design and implement a Domain-Constrained Retrieval-Augmented Generation (RAG) Chatbot — a system that combines semantic search with controlled AI generation to ensure that every response is:

- ✔ Grounded in verified website content

- ✔ Context-aware and relevant

- ✔ Free from hallucinations

- ✔ Transparent with source-backed answers

This case study is based on a real-world implementation on The Tech Thinker, where a lightweight yet powerful 7-step RAG architecture was developed to deliver enterprise-level chatbot accuracy using minimal infrastructure.

What is a Domain-Constrained RAG Chatbot?

A Domain-Constrained Retrieval-Augmented Generation (RAG) Chatbot is an AI system designed to generate responses strictly based on a predefined and controlled knowledge source, such as a website, documentation portal, or internal knowledge base.

Unlike traditional AI chatbots that rely on broad, pre-trained knowledge, a domain-constrained RAG system ensures that every answer is derived from verified content within a specific domain, eliminating ambiguity and improving trust.

At its core, the system operates through a structured pipeline:

- Retrieval Layer – Searches for relevant information from the target domain using semantic similarity

- Embedding Layer – Converts both user queries and content into vector representations for accurate matching

- Vector Database – Stores structured content in an optimized format for fast retrieval (e.g., Pinecone)

- Generation Layer – Produces responses using a language model, but only based on retrieved context
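To make the "only based on retrieved context" constraint concrete, here is a minimal Python sketch of how the generation layer's prompt might be assembled. The function name, the chunk dictionary keys (`text`, `url`), and the refusal wording are illustrative assumptions, not the published implementation.

```python
from typing import Dict, List

# Hypothetical refusal text; the article does not specify the exact wording.
FALLBACK_ANSWER = "I couldn't find this on the website."


def build_grounded_messages(question: str, chunks: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Build chat messages that restrict the model to the retrieved chunks.

    Each chunk is assumed to be a dict with "text" and "url" keys.
    """
    # Label every chunk with its source so answers stay traceable.
    context = "\n\n".join(f"[Source: {c['url']}]\n{c['text']}" for c in chunks)
    system = (
        "Answer using ONLY the context below. "
        f"If the context does not contain the answer, reply exactly: {FALLBACK_ANSWER}"
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": f"Context:\n{context}\n\nQuestion: {question}"},
    ]
```

The resulting list follows the common role/content chat-message shape, so it can be handed to a chat-completion call; the instruction alone does not guarantee grounding, which is why the retrieval, routing, and fallback layers described below still matter.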

This architecture ensures that the chatbot:

- ✔ Retrieves information only from a specific domain (e.g., a website)

- ✔ Uses embeddings and vector search for semantic understanding

- ✔ Generates responses grounded in real, traceable content

- ✔ Provides source-aware, context-rich answers

Unlike generic AI systems, a Domain-Constrained RAG Chatbot does not rely on guesswork or general knowledge. Instead, it acts as a knowledge-grounded assistant, ensuring that every response is accurate, explainable, and aligned with the actual content.

This makes it particularly valuable for:

- Engineering knowledge platforms

- Technical documentation systems

- Customer support automation

- Enterprise knowledge bases

Problem with Traditional AI Chatbots

Despite the rapid progress in AI, most chatbot implementations still suffer from fundamental limitations—especially when applied to domain-specific platforms like engineering websites, blogs, or documentation systems.

Based on the research problem identified in this work, the key issues are:

1. Hallucinations in Large Language Models (LLMs)

LLMs are designed to predict the most probable next word, not to verify factual correctness.

As a result, they often:

- Generate answers that sound correct but are factually wrong

- Fill knowledge gaps with assumptions

- Produce responses not present in the actual data source

This leads to hallucinated outputs, which are dangerous in technical domains.

2. Lack of Semantic Understanding in Traditional Search

Most websites, including WordPress-based platforms, rely on:

- Keyword matching

- Basic search indexing

These systems:

- Cannot understand user intent

- Fail to provide contextual or summarized answers

- Require users to manually navigate multiple pages

Result: Poor user experience and inefficient information discovery

3. Complexity of Enterprise RAG Systems

While Retrieval-Augmented Generation (RAG) solves many of these issues, most existing implementations:

- Are designed for large-scale enterprise systems

- Require complex infrastructure (multiple services, pipelines)

- Involve high computational and operational costs

This makes them impractical for small and medium websites

Combined Impact

When these limitations come together, they create serious problems:

- Poor user trust due to unreliable answers

- Incorrect or misleading information

- No control over AI-generated responses

- Difficulty scaling intelligent search for small platforms

Ultimately, the system becomes unpredictable and unusable for critical applications

Proposed Solution Overview

To overcome these limitations, this work introduces a Domain-Constrained RAG Chatbot, specifically designed to deliver accurate, controllable, and scalable AI responses for small and medium-sized websites.

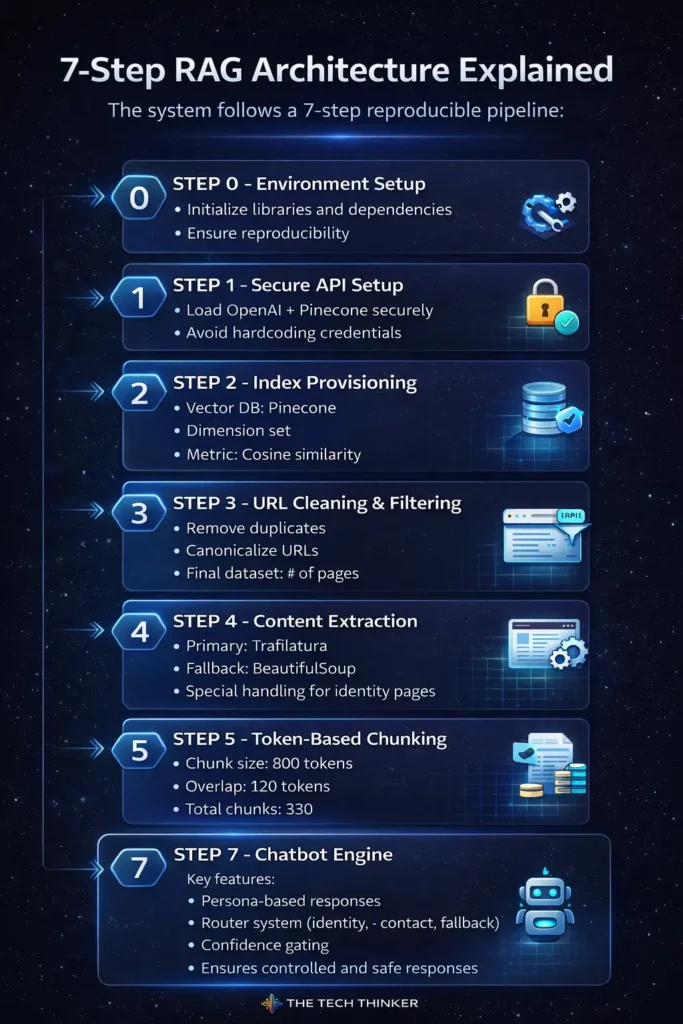

7-Step RAG Architecture Explained

The system follows a reproducible 7-step pipeline, preceded by an environment-setup step (Step 0):

🔹 STEP 0 — Environment Setup

- Initialize libraries and dependencies

- Ensure reproducibility

🔹 STEP 1 — Secure API Setup

- Load OpenAI + Pinecone securely

- Avoid hardcoding credentials
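A minimal sketch of loading credentials from environment variables rather than from source code. The variable names `OPENAI_API_KEY` and `PINECONE_API_KEY` are assumptions here (they are the conventional names for the OpenAI and Pinecone SDKs), and `load_api_keys` is a hypothetical helper.

```python
import os


def load_api_keys() -> dict:
    """Read API keys from the environment and fail loudly if any are missing."""
    # Assumed variable names; use whatever names your deployment defines.
    required = ("OPENAI_API_KEY", "PINECONE_API_KEY")
    missing = [name for name in required if not os.environ.get(name)]
    if missing:
        raise RuntimeError(f"Missing environment variables: {', '.join(missing)}")
    return {name: os.environ[name] for name in required}
```

Failing at startup when a key is absent surfaces configuration mistakes immediately, instead of as a confusing authentication error in the middle of ingestion.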

🔹 STEP 2 — Index Provisioning

- Vector DB: Pinecone

- Dimension: set to match the embedding model's output size

- Metric: Cosine similarity
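Pinecone computes similarity server-side, but the metric itself is simple. This in-memory sketch shows what cosine similarity and top-k retrieval compute; it is a stand-in for illustration, not how Pinecone works internally, and `top_k` is a hypothetical helper name. The dimension check mirrors why the index dimension must match the embedding size.

```python
import math
from typing import Dict, List, Tuple


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    if len(a) != len(b):
        raise ValueError("dimension mismatch between query and stored vector")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: List[float], index: Dict[str, List[float]], k: int = 3) -> List[Tuple[str, float]]:
    """Return the k stored IDs most similar to the query, best first."""
    scored = [(vid, cosine_similarity(query, vec)) for vid, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]
```

Cosine similarity ignores vector length and compares only direction, which suits text embeddings where meaning is carried by direction.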

🔹 STEP 3 — URL Cleaning & Filtering

- Remove duplicates

- Canonicalize URLs

- Final dataset: ~80 pages
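The article does not spell out its canonicalization rules, so the following Python sketch assumes a few common ones: force HTTPS, lowercase the host, treat `www.` and the bare domain as the same site, strip trailing slashes, and drop query strings and fragments. The helper names are hypothetical.

```python
from typing import Iterable, List
from urllib.parse import urlsplit, urlunsplit


def canonicalize(url: str) -> str:
    """Normalize a URL so trivially different forms map to one key."""
    parts = urlsplit(url.strip())
    scheme = "https"  # assumption: the site is served over HTTPS
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]  # assumption: www and bare domain serve the same page
    path = parts.path.rstrip("/") or "/"
    # Drop query strings and fragments (tracking parameters, in-page anchors).
    return urlunsplit((scheme, host, path, "", ""))


def clean_urls(urls: Iterable[str]) -> List[str]:
    """Canonicalize and de-duplicate, preserving first-seen order."""
    seen = set()
    result = []
    for url in urls:
        key = canonicalize(url)
        if key not in seen:
            seen.add(key)
            result.append(key)
    return result
```

Dropping every query string is aggressive; if a site uses query parameters to select content, keep those parameters instead.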

🔹 STEP 4 — Content Extraction

- Primary: Trafilatura

- Fallback: BeautifulSoup

- Special handling for identity pages
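The dual-extraction strategy can be expressed as a small control-flow helper. In this sketch the extractors are passed in as callables so the logic runs without third-party packages; the 200-character threshold and the function name are assumptions. The commented lines show one possible way to plug in Trafilatura and BeautifulSoup.

```python
from typing import Callable, Optional

# An extractor takes raw HTML and returns plain text (or None on failure).
Extractor = Callable[[str], Optional[str]]


def extract_text(html: str, primary: Extractor, fallback: Extractor,
                 min_chars: int = 200) -> str:
    """Use the primary extractor; fall back when it fails or returns too little."""
    try:
        primary_text = (primary(html) or "").strip()
    except Exception:
        primary_text = ""  # treat a crashing extractor like an empty result
    if len(primary_text) >= min_chars:
        return primary_text
    backup = (fallback(html) or "").strip()
    # Keep whichever extractor recovered more text.
    return backup if len(backup) > len(primary_text) else primary_text


# One possible wiring with the libraries named above (assumption, not executed here):
#   import trafilatura
#   from bs4 import BeautifulSoup
#   text = extract_text(
#       html,
#       primary=trafilatura.extract,
#       fallback=lambda h: BeautifulSoup(h, "html.parser").get_text(" ", strip=True),
#   )
```

Trafilatura focuses on main-content extraction and may return nothing for unusual layouts (such as short identity pages), which is where a blunter full-page fallback helps.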

🔹 STEP 5 — Token-Based Chunking

- Chunk size: 800 tokens

- Overlap: 120 tokens

- Total chunks: 330
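With an 800-token window and a 120-token overlap, each new chunk advances by 800 - 120 = 680 tokens. The sketch below operates on a plain list of tokens; producing that list with the embedding model's tokenizer (for example, the `tiktoken` library) is an assumption about tooling rather than a detail from the article, and `chunk_tokens` is a hypothetical name.

```python
from typing import List


def chunk_tokens(tokens: List[str], size: int = 800, overlap: int = 120) -> List[List[str]]:
    """Split a token list into fixed-size windows that share `overlap` tokens."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    step = size - overlap  # 680 tokens of new content per chunk with the defaults
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # this window already reaches the end of the text
    return chunks
```

For a 2,000-token page this yields three chunks of 800, 800, and 640 tokens, and the last 120 tokens of each chunk reappear at the start of the next, so a sentence cut at a boundary is still seen whole in one of them.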

🔹 STEP 6 — Embeddings & Vector Storage

- Model: text-embedding-3-small

- Stored in Pinecone

- Metadata-rich vectors
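The article mentions metadata-rich vectors and deterministic chunk IDs without giving a schema. This sketch assumes an ID derived from a SHA-256 hash of the page URL and chunk position, plus a metadata payload of URL, title, chunk index, and text; the field names and helper functions are hypothetical. The `id`/`values`/`metadata` dictionary shape is one of the record formats the Pinecone Python client accepts for upserts.

```python
import hashlib
from typing import Dict, List


def chunk_id(url: str, index: int) -> str:
    """Deterministic ID: the same URL and chunk position always give the same ID."""
    digest = hashlib.sha256(f"{url}#{index}".encode("utf-8")).hexdigest()
    return digest[:32]


def build_records(url: str, title: str, chunks: List[str],
                  vectors: List[List[float]]) -> List[Dict]:
    """Pair each chunk with its embedding and source metadata for upsert."""
    if len(chunks) != len(vectors):
        raise ValueError("each chunk needs exactly one vector")
    return [
        {
            "id": chunk_id(url, i),
            "values": vec,
            "metadata": {"url": url, "title": title, "chunk_index": i, "text": text},
        }
        for i, (text, vec) in enumerate(zip(chunks, vectors))
    ]
```

Because the same page and chunk position always hash to the same ID, re-running ingestion overwrites existing vectors instead of duplicating them, and the stored `url` is what lets the chatbot cite its source.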

🔹 STEP 7 — Chatbot Engine

Key features:

- Persona-based responses

- Router system (identity, contact, fallback)

- Confidence gating

- Single-source output

Ensures controlled and safe responses
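The router and confidence gate can be sketched as a plain function that runs before any text is generated. The keyword lists, the 0.40 similarity cutoff, and the `route` name are hypothetical; the article names the routes (identity, contact, fallback) and the gating idea but not how they are implemented.

```python
from typing import List, Tuple

# Hypothetical keyword rules and threshold, chosen for illustration only.
IDENTITY_WORDS = ("who are you", "who is the author", "founder", "about you")
CONTACT_WORDS = ("contact", "email", "reach you", "get in touch")
MIN_SCORE = 0.40  # assumed cosine-similarity cutoff for answering


def route(question: str, matches: List[Tuple[str, float]]) -> Tuple[str, str]:
    """Pick a processing path; `matches` are (url, score) pairs, best first."""
    q = question.lower()
    if any(w in q for w in IDENTITY_WORDS):
        return ("identity", "")
    if any(w in q for w in CONTACT_WORDS):
        return ("contact", "")
    if not matches or matches[0][1] < MIN_SCORE:
        return ("fallback", "")  # low confidence: decline instead of guessing
    return ("content", matches[0][0])  # single source: cite only the top match
```

In this sketch only the `content` path would go on to call the language model, and it carries a single source URL, in line with the single-source output listed above.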

Implementation Highlights

- Dual extraction strategy improves reliability

- Forced injection ensures identity availability

- Deterministic chunk IDs improve reproducibility

- Retrieval diagnostics validate system integrity

Evaluation Results

| Metric | Result |
|---|---|
| Retrieval Accuracy | 93.3% |
| Groundedness | 100% |
| Hallucination Rate | 0% |
| Routing Accuracy | 100% |
| Fallback Reliability | 100% |

On the evaluated query set, these results indicate production-level reliability and predictable system behavior.

What These Results Actually Mean

1. Retrieval Accuracy — 93.3%

This metric measures whether the system retrieves the correct source content for a given query.

- 14 out of 15 queries returned the expected source

- Demonstrates strong semantic matching using embeddings
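As a check of the arithmetic, 14 / 15 = 0.933, or 93.3%. The sketch below computes the same kind of hit rate; it assumes a query counts as a hit when the expected URL is the top-1 result, since the article does not state whether top-1 or top-k was used. The function name is hypothetical.

```python
from typing import Dict, List


def retrieval_accuracy(expected: Dict[str, str], retrieved: Dict[str, List[str]],
                       k: int = 1) -> float:
    """Share of queries whose expected source URL appears in the top-k results."""
    if not expected:
        raise ValueError("need at least one labelled query")
    hits = sum(1 for q, url in expected.items() if url in retrieved.get(q, [])[:k])
    return hits / len(expected)
```

With 15 labelled queries and one miss, this returns 14/15, which rounds to the 93.3% reported above.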

Insight:

Even with a relatively small dataset (~80 pages), the system achieves high retrieval accuracy, suggesting that well-structured indexing matters more than data size.

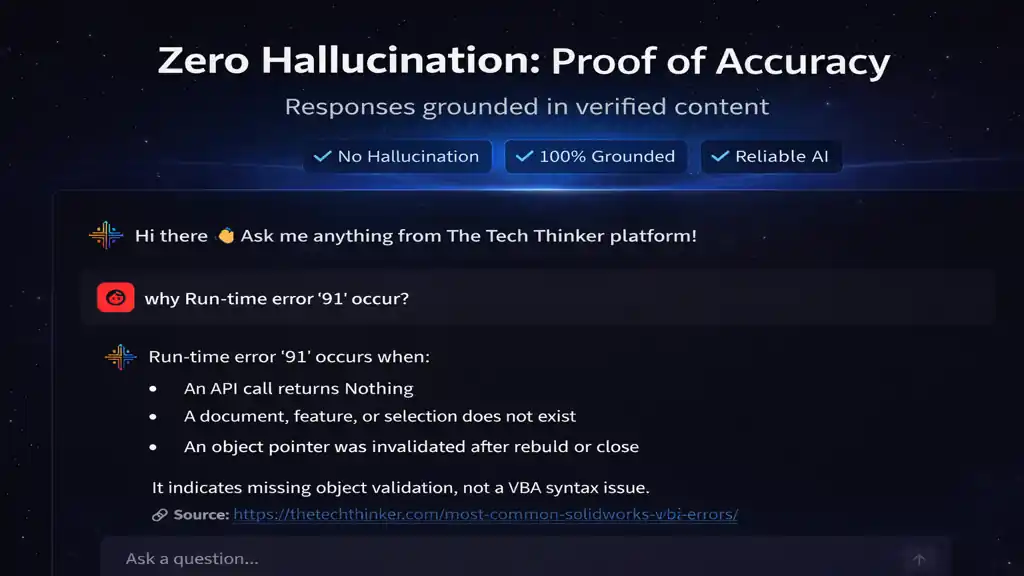

2. Groundedness — 100%

Groundedness ensures that every response:

- Is strictly derived from retrieved content

- Does not introduce external or fabricated information

Insight:

This is one of the most critical achievements. It confirms that the system behaves as a knowledge-grounded assistant, not a generative guess engine.

3. Hallucination Rate — 0%

This means:

- No fabricated answers

- No unsupported claims

- No “confident but wrong” outputs

Insight:

In typical LLM systems, hallucination is a persistent risk.

Achieving 0% hallucination on the evaluated queries indicates that the combination of domain constraint and retrieval-first design works effectively.

4. Routing Accuracy — 100%

The router correctly handled all query types:

- Identity queries

- Contact/navigation queries

- Content-related queries

- Unsupported queries

Insight:

This highlights the importance of system design beyond just LLMs.

The router acts as a control layer, ensuring correct processing paths.

5. Fallback Reliability — 100%

When the system encounters:

- Unknown topics

- Low-confidence retrieval

- Out-of-domain queries

It safely avoids answering instead of hallucinating.

Insight:

This is crucial for real-world deployment, where unpredictable queries are common.

Why These Results Matter

Traditional AI Approach:

✔ Generate → hope it’s correct

Domain-Constrained RAG Approach:

✔ Retrieve → verify → generate

Key Engineering Takeaway

Reliability in AI is not achieved by improving the model alone

It is achieved by controlling the system around the model

This includes:

- Structured retrieval pipelines

- Metadata-rich indexing

- Router-based decision systems

- Fallback safety mechanisms

Real-World Implication

With these results, the system demonstrates that:

- Small websites can achieve enterprise-grade AI accuracy

- AI systems can be predictable and controllable

- Trustworthy chatbot deployment is possible without heavy infrastructure

Final Insight

👉 The most important metric here is NOT accuracy

👉 It is zero hallucination + 100% groundedness

Because in real-world systems:

One wrong answer can break user trust

Comparison with Traditional Search

To understand the real impact of a Domain-Constrained RAG Chatbot, it is essential to compare it with conventional keyword-based search systems commonly used in websites.

Comparison Overview

| Feature | Keyword Search | RAG System |
|---|---|---|
| Semantic Understanding | ❌ No | ✔ Yes |
| Context Awareness | ❌ No | ✔ Yes |
| Grounded Responses | ❌ No | ✔ Yes |
| Hallucination Risk | High (with generic AI) | Zero |

This comparison clearly highlights the fundamental shift from search-based retrieval to intelligent, context-aware response generation.

Detailed Analysis

1. Semantic Understanding

Keyword Search:

- Matches exact words or phrases

- Fails when users use different wording or natural language

- Cannot interpret intent

RAG System:

- Uses embeddings to understand meaning, not just keywords

- Identifies relevant content even with different phrasing

👉 Insight:

RAG enables human-like understanding of queries, making interactions more natural and effective.

2. Context Awareness

Keyword Search:

- Returns a list of links

- No summarization or explanation

- User must manually interpret content

RAG System:

- Retrieves relevant content

- Generates context-aware, summarized responses

- Combines multiple content chunks when needed

👉 Insight:

RAG transforms search into direct answer delivery, reducing user effort.

3. Grounded Responses

Keyword Search:

- Provides links without guaranteeing relevance

- No validation of correctness

RAG System:

- Generates responses strictly from retrieved content

- Ensures traceability with source reference

👉 Insight:

This introduces accountability in AI responses, which is critical for technical and engineering domains.

4. Hallucination Risk

Keyword Search + Generic AI:

- High risk when combined with LLMs

- AI may generate unsupported or fabricated answers

Domain-Constrained RAG System:

- Retrieval-first approach prevents fabrication

- Fallback mechanisms avoid incorrect answers

👉 Insight:

Hallucination is not eliminated by better models—but by better system design.

Why This Comparison Matters

This is not just a feature comparison—it represents a paradigm shift in information systems:

Traditional Model:

Search → Click → Read → Interpret

RAG-Based Model:

Ask → Retrieve → Generate → Answer

Key Engineering Takeaway

👉 Traditional search systems are data retrieval tools

👉 RAG systems are knowledge delivery systems

This distinction is crucial.

RAG does not replace search—it evolves it into an intelligent assistant layer.

Real-World Impact

With RAG systems:

- Users get direct, reliable answers instead of links

- Websites become interactive knowledge platforms

- AI systems become trustworthy and explainable

Final Insight

👉 The biggest advantage of RAG is not convenience

👉 It is confidence

Because:

Users trust systems that provide correct, grounded, and explainable answers

Benefits of Domain-Constrained RAG

- ✔ Zero hallucinations

- ✔ Reliable answers

- ✔ Lightweight deployment

- ✔ Fully reproducible pipeline

- ✔ Ideal for small websites

Limitations

- No PDF/image retrieval

- Manual content updates

- English-only

- No multi-turn memory

Future Scope

- Multi-modal retrieval (PDF, images)

- Multi-language support

- CAD/engineering integration

- Real-time content updates

Research Reference

👉 The complete research work, including system design, architecture, implementation, and evaluation, is available below:

🔗 Full Paper:

This publication presents a domain-constrained RAG framework developed for TheTechThinker.com by Ramu Gopal, Founder of The Tech Thinker, demonstrating how small and medium websites can achieve:

- ✔ Zero hallucination chatbot responses

- ✔ 100% grounded and verifiable answers

- ✔ Lightweight, reproducible AI architecture

The paper also includes:

- Detailed 7-step system pipeline

- Real-world implementation insights

- Benchmark-based evaluation results

- Practical engineering considerations for deployment

Readers interested in AI chatbot development, RAG systems, or engineering knowledge automation are encouraged to explore the full paper for a deeper technical understanding.

About the Author

Ramu Gopal is a Senior Mechanical Design Engineer and AI systems developer specializing in CAD automation, engineering workflows, and domain-specific AI applications.

He is the founder of The Tech Thinker, a technology platform focused on engineering, artificial intelligence, and automation systems. His work centers on building practical solutions such as:

- Retrieval-Augmented Generation (RAG) chatbots

- SolidWorks automation tools

- Engineering knowledge systems

- AI-assisted design workflows

Ramu has hands-on experience in mechanical design, CAD customization, and system-level automation, with a strong focus on solving real-world engineering problems using structured and scalable approaches.

He has also published research on domain-constrained RAG systems for website chatbots, demonstrating how small platforms can achieve accurate, reliable, and hallucination-free AI systems.

His goal is to bridge the gap between engineering and artificial intelligence, enabling smarter and more efficient digital workflows.

- Website: The Tech Thinker

- LinkedIn: Follow here

- ORCID: https://orcid.org/0009-0005-4571-8213

Conclusion

This case study demonstrates that building reliable AI systems is not solely dependent on more powerful models, but on well-designed system architecture and controlled data flow.

The proposed Domain-Constrained RAG Chatbot successfully addresses one of the most critical challenges in modern AI—hallucinations—by enforcing strict grounding in verified website content. Through a structured 7-step pipeline, the system combines content extraction, semantic retrieval, vector indexing, and router-based control to deliver accurate, transparent, and predictable responses.

The evaluation results further validate the effectiveness of this approach, achieving:

- ✔ 93.3% retrieval accuracy

- ✔ 100% grounded responses

- ✔ 0% hallucination rate

- ✔ 100% routing and fallback reliability

These outcomes highlight that even small and medium-sized websites can implement enterprise-grade conversational AI systems without complex infrastructure, provided the architecture is carefully designed.

From an engineering perspective, the key takeaway is clear:

AI reliability is not achieved by scaling models alone, but by constraining and structuring how they interact with data.

The Domain-Constrained RAG framework presented in this work provides a practical, reproducible, and scalable blueprint for deploying trustworthy chatbot systems across:

- Knowledge-driven websites

- Technical documentation platforms

- Engineering and product portals

- Enterprise knowledge bases

As AI adoption continues to grow, systems that prioritize accuracy, explainability, and control will define the next generation of intelligent applications.

👉 The future of AI is not just intelligent — it is reliable, grounded, and accountable.

FAQ on Domain-Constrained RAG Chatbot System

1. What is a Domain-Constrained RAG Chatbot?

A Domain-Constrained RAG Chatbot is an AI system that retrieves information from a specific data source (such as a website) and generates responses strictly based on that content. This approach ensures accurate, traceable, and context-aware answers without relying on general model knowledge.

2. How does a Domain-Constrained RAG Chatbot reduce hallucinations?

A Domain-Constrained RAG Chatbot reduces hallucinations by using a retrieval-first approach, where relevant content is fetched from a verified source before generating a response. This ensures that the output is grounded in real data rather than model assumptions.

3. Why is a Domain-Constrained RAG Chatbot important for engineering systems?

In engineering systems, accuracy and reliability are critical. A Domain-Constrained RAG Chatbot ensures that responses are based on validated technical content, making it suitable for applications such as CAD workflows, SolidWorks automation, and engineering knowledge platforms.

4. Can a Domain-Constrained RAG Chatbot be used with SolidWorks automation?

Yes, a Domain-Constrained RAG Chatbot can be integrated with SolidWorks automation systems to provide contextual assistance, documentation retrieval, and workflow guidance. It can act as an AI assistant for design validation, macros, and engineering standards.

5. What technologies are used in a Domain-Constrained RAG Chatbot?

A typical Domain-Constrained RAG Chatbot uses:

- Large Language Models (LLMs)

- Embedding models (e.g., OpenAI embeddings)

- Vector databases (e.g., Pinecone)

- Content extraction and chunking pipelines

These components work together to enable accurate and efficient retrieval-based responses.

6. How is a Domain-Constrained RAG Chatbot different from traditional search?

Unlike traditional keyword search, a Domain-Constrained RAG Chatbot:

- Understands user intent using semantic embeddings

- Provides direct, context-aware answers

- Ensures responses are grounded in verified content

This makes it more effective for technical and engineering use cases.

7. Is a Domain-Constrained RAG Chatbot suitable for small websites?

Yes, a Domain-Constrained RAG Chatbot can be implemented on small and medium websites using a lightweight and reproducible architecture, without requiring complex enterprise infrastructure.

8. How does The Tech Thinker use a Domain-Constrained RAG Chatbot?

The Tech Thinker implements a Domain-Constrained RAG Chatbot to provide accurate, source-backed answers based on its engineering, AI, and CAD automation content. In its reported evaluation, the system produced no hallucinated responses.

9. How does a Domain-Constrained RAG Chatbot support AI engineering systems?

A Domain-Constrained RAG Chatbot enhances AI engineering systems by enabling:

- Knowledge retrieval from structured datasets

- Context-aware assistance for technical workflows

- Reliable decision support based on domain-specific information

10. Who developed this Domain-Constrained RAG Chatbot system?

This Domain-Constrained RAG Chatbot system was developed by Ramu Gopal, an AI systems engineer and CAD automation specialist, as part of The Tech Thinker platform, focusing on practical applications of AI in engineering systems.