Machine Learning in Production rarely fails because of weak algorithms or poor model architectures.

It fails because production environments expose assumptions that experimental setups never challenge.

In controlled settings, data is clean, labels are trusted, and failure has no real consequence. In enterprise systems, none of those conditions hold for long. Data shifts quietly. Business rules evolve. Inputs arrive incomplete or late. And decisions made by models suddenly carry financial, operational, or regulatory impact.

This gap between experimental success and real-world reliability is where most machine learning initiatives stall. Teams celebrate strong validation scores, deploy models confidently, and then struggle to explain why outcomes degrade over time. The problem is not that machine learning stops working. The problem is that production systems demand discipline that experimentation does not.

To understand why so many initiatives struggle, machine learning must be examined not as a model-building exercise, but as an operational system. When viewed through that lens, a consistent set of failure patterns appears across industries and use cases.

This article examines the seven most critical failure modes in Machine Learning in Production, explains why they occur in enterprise environments, and outlines how experienced AI teams design systems that remain reliable after deployment.

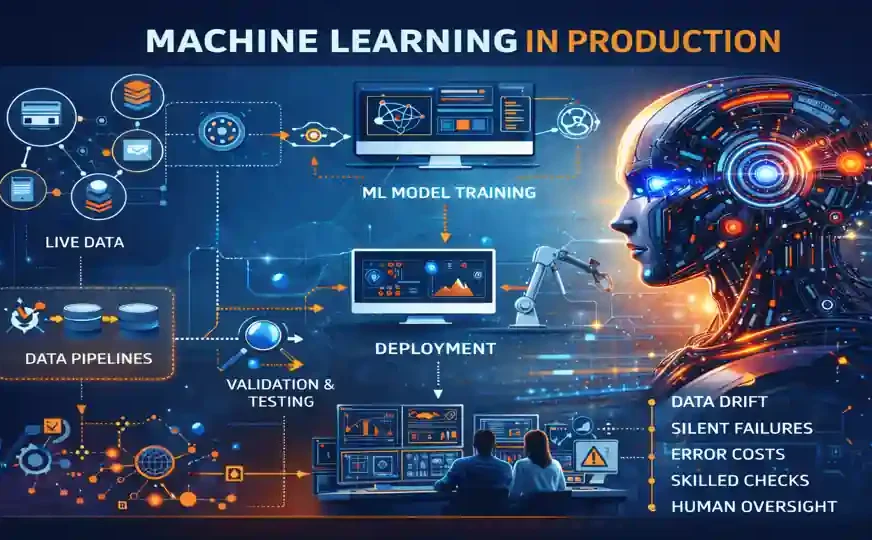

What Machine Learning in Production Really Means in Enterprises

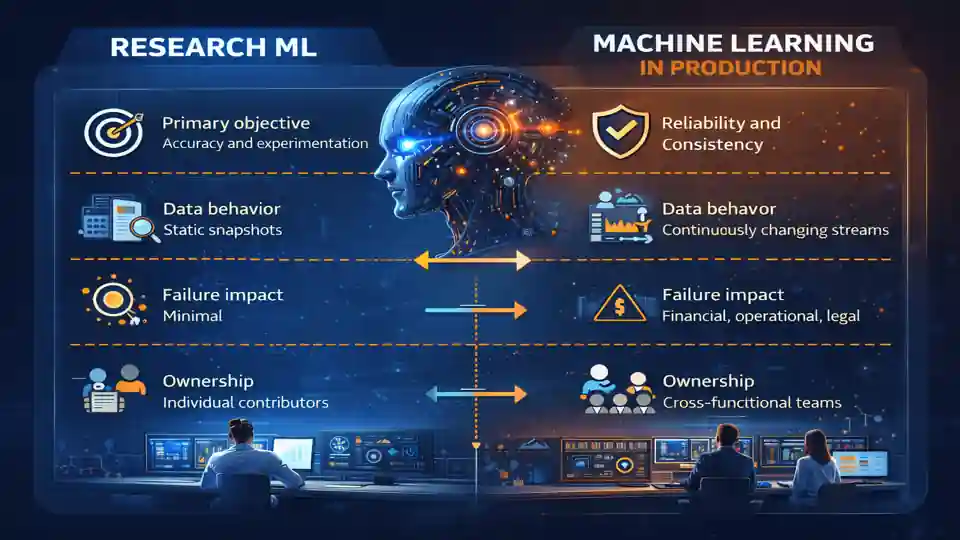

Machine learning in research environments and Machine Learning in Production are fundamentally different activities, even when the same algorithms are involved.

In research or prototyping phases, the objective is to discover patterns, validate hypotheses, or maximize a metric on a fixed dataset. In production, the objective shifts toward consistency, predictability, and controlled behavior over time. Models are no longer judged only by performance, but by how safely and transparently they operate within a larger system.

In enterprises, production machine learning systems interact with data pipelines, business workflows, user behavior, compliance requirements, and human decision-makers. The model is only one component in a broader operational chain.

The distinction becomes clearer when comparing typical characteristics.

| Dimension | Research ML | Machine Learning in Production |
|---|---|---|
| Primary objective | Accuracy and experimentation | Reliability and consistency |
| Data behavior | Static snapshots | Continuously changing streams |
| Failure impact | Minimal | Financial, operational, legal |
| Ownership | Individual contributors | Cross-functional teams |
| Governance | Informal | Mandatory and auditable |

This shift in context explains why many techniques that work well during development become fragile once deployed. Production environments demand explicit assumptions, clear ownership, and continuous validation—requirements that are often underestimated during early phases.

Why Machine Learning in Production Fails at Enterprise Scale

Most enterprise failures do not occur immediately after deployment. Systems appear to work correctly for weeks or months, creating a false sense of stability. By the time issues surface, the root causes are difficult to trace back to initial design decisions.

These failures are rarely caused by a single bug or misconfiguration. Instead, they emerge from structural weaknesses in how machine learning systems are designed, monitored, and governed.

Across industries, seven recurring failure categories account for the majority of breakdowns in Machine Learning in Production:

- Data drift
- Training–serving skew
- Metric blindness
- Missing validation layers
- Monitoring and retraining treated as afterthoughts
- Absence of human authority
- Lack of lifecycle ownership

Each of these failures is manageable in isolation. Combined, they create systems that quietly degrade until trust is lost.

Failure #1 — Data Drift in Machine Learning in Production

Real-world data is never stationary. Business conditions evolve, customer behavior changes, sensors age, and external environments introduce variation that training datasets cannot fully anticipate.

In Machine Learning in Production, data drift occurs when the statistical properties of incoming data differ from those seen during training. The model itself has not changed, but the world around it has.

Common sources of drift in enterprise systems include:

- Shifts in customer preferences or usage patterns
- Seasonal or cyclical business effects
- Changes in data collection methods
- Regulatory or policy updates
- Hardware or sensor recalibration

Drift often begins subtly. Predictions may still appear reasonable, and headline metrics may not change immediately. Over time, however, decision quality degrades in ways that are difficult to detect without explicit monitoring.

| Drift Type | What Changes | Risk |
|---|---|---|
| Input drift | Feature distributions | Gradual accuracy loss |
| Concept drift | Feature–target relationship | Incorrect decisions |
| Label drift | Target distribution or ground-truth quality | Miscalibrated outputs, corrupted retraining data |

Experienced teams treat drift as inevitable rather than exceptional. Instead of asking whether drift will occur, they design systems to detect it early and respond before impact accumulates.
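One widely used way to detect input drift early is the Population Stability Index (PSI), which compares how a feature was distributed at training time with how it is distributed in live traffic. The sketch below is a minimal pure-Python version for a single numeric feature; the equal-width binning, the clipping of out-of-range live values into the edge bins, and the `eps` smoothing are illustrative choices, not a method prescribed by this article.

```python
import math
from typing import List, Sequence

def population_stability_index(expected: Sequence[float], actual: Sequence[float],
                               bins: int = 10, eps: float = 1e-6) -> float:
    """PSI between a training-time sample (expected) and a live sample (actual)."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clip out-of-range live values
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    p_exp = proportions(expected)
    p_act = proportions(actual)
    # PSI = sum over bins of (actual - expected) * ln(actual / expected)
    return sum((a - e) * math.log(a / e) for e, a in zip(p_exp, p_act))

train = [i / 100 for i in range(1000)]
live_same = list(train)
live_shifted = [v + 5 for v in train]
print(population_stability_index(train, live_same))     # 0.0 for identical samples
print(population_stability_index(train, live_shifted))  # large value: strong shift
```

A frequently quoted rule of thumb reads PSI below roughly 0.1 as stable and above roughly 0.25 as a significant shift, but thresholds should be tuned per feature. PSI only sees input drift; concept drift becomes visible only once outcome labels arrive.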

Failure #2 — Training–Serving Skew in Production ML Systems

Training–serving skew arises when the data used during model training differs from the data used during inference. Although often unintentional, this mismatch is one of the most common causes of degraded performance after deployment.

In enterprise environments, skew is introduced through subtle inconsistencies:

- Offline feature engineering pipelines that differ from online inference logic
- Time-window mismatches between historical and live data
- Missing or default feature values handled differently at runtime
- Serialization or preprocessing differences across systems

The problem is rarely obvious during testing. Models may perform well in staging environments, only to behave unpredictably under real traffic conditions.

Experienced teams address this risk by establishing feature contracts—explicit definitions of feature semantics, transformations, and acceptable ranges shared across training and serving pipelines. The goal is not code reuse alone, but behavioral consistency.
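A feature contract can be as simple as one shared definition that both pipelines import, so the transformation, the default for missing values, and the acceptable range cannot diverge. The sketch below assumes a Python codebase where training and serving can import the same module; `FeatureContract`, `build_features`, and the two example features are hypothetical names for illustration only.

```python
import math
from dataclasses import dataclass
from typing import Callable, Dict, Mapping, Optional

@dataclass(frozen=True)
class FeatureContract:
    """One feature definition shared by the training and serving pipelines."""
    name: str                            # feature name the model sees
    source: str                          # key of the raw input field
    transform: Callable[[float], float]  # the only permitted transformation
    default: float                       # value used when the raw input is missing
    min_value: float                     # acceptable range after transformation
    max_value: float

    def apply(self, raw: Optional[float]) -> float:
        value = self.default if raw is None else self.transform(raw)
        if not self.min_value <= value <= self.max_value:
            raise ValueError(
                f"{self.name}={value} outside [{self.min_value}, {self.max_value}]")
        return value

# Hypothetical features for illustration only.
CONTRACTS = [
    FeatureContract("log_order_value", "order_value", math.log1p, 0.0, 0.0, 20.0),
    FeatureContract("days_since_signup", "days_since_signup", float, 0.0, 0.0, 36_500.0),
]

def build_features(raw: Mapping[str, Optional[float]]) -> Dict[str, float]:
    """Imported by both the offline training job and the online inference service."""
    return {c.name: c.apply(raw.get(c.source)) for c in CONTRACTS}
```

Because missing values and out-of-range inputs are handled in one place, a skewed input fails loudly at serving time instead of silently producing a value the model never saw in training.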

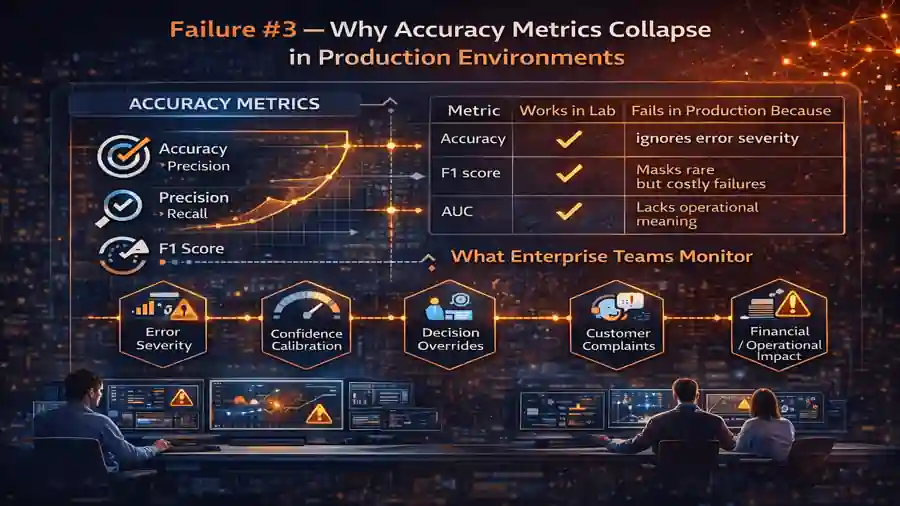

Failure #3 — Why Accuracy Metrics Collapse in Production Environments

Accuracy, precision, recall, and similar metrics are useful during experimentation. In Machine Learning in Production, they often fail to capture what actually matters.

Production systems operate prospectively, not retrospectively. Decisions must be made before outcomes are known, and the cost of errors is rarely symmetric. A single incorrect prediction can carry far more weight than dozens of correct ones.

| Metric | Works in Lab | Fails in Production Because |
|---|---|---|
| Accuracy | Yes | Ignores error severity |
| F1 score | Yes | Masks rare but costly failures |
| AUC | Yes | Lacks operational meaning |
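The first row of the table can be made concrete with a toy example: two classifiers with identical accuracy can carry very different costs once errors are weighted by severity. The sketch assumes a hypothetical fraud-style setting in which a missed positive (false negative) costs 50 times more than a false alarm; the cost values and the synthetic labels are invented for illustration.

```python
from typing import Sequence

# Hypothetical asymmetric costs: a missed positive is far worse than a false alarm.
COST_FALSE_POSITIVE = 1.0
COST_FALSE_NEGATIVE = 50.0

def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def expected_cost(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Average cost per decision under the cost assumptions above."""
    total = 0.0
    for t, p in zip(y_true, y_pred):
        if t == 0 and p == 1:
            total += COST_FALSE_POSITIVE
        elif t == 1 and p == 0:
            total += COST_FALSE_NEGATIVE
    return total / len(y_true)

y_true = [0] * 95 + [1] * 5
model_a = [0] * 100                      # never flags anything: misses all 5 positives
model_b = [1] * 5 + [0] * 90 + [1] * 5   # 5 false alarms, catches every positive

print(accuracy(y_true, model_a), expected_cost(y_true, model_a))  # 0.95 2.5
print(accuracy(y_true, model_b), expected_cost(y_true, model_b))  # 0.95 0.05
```

Both models score 95% accuracy, yet under these assumed costs model A is fifty times more expensive per decision.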

Enterprise teams monitor additional signals that reflect real-world impact:

- Error severity rather than frequency
- Confidence calibration
- Decision reversals or overrides
- Customer complaints or escalations
- Financial or operational loss tied to predictions

This shift from model-centric metrics to outcome-centric monitoring is essential for sustainable deployment.
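Confidence calibration, one of the signals listed above, is often tracked with expected calibration error (ECE): predictions are grouped by stated confidence, and each group's average confidence is compared with how often it was actually correct. The sketch below uses ten equal-width bins, which is a common but arbitrary choice, and assumes correctness is known from outcome feedback.

```python
from typing import List, Sequence, Tuple

def expected_calibration_error(confidences: Sequence[float], correct: Sequence[bool],
                               n_bins: int = 10) -> float:
    """Weighted average gap between stated confidence and observed accuracy."""
    n = len(confidences)
    bins: List[List[Tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes in the last bin
        bins[idx].append((conf, ok))
    ece = 0.0
    for b in bins:
        if not b:
            continue
        avg_conf = sum(c for c, _ in b) / len(b)
        acc = sum(1 for _, ok in b if ok) / len(b)
        ece += (len(b) / n) * abs(avg_conf - acc)
    return ece

# A model that says "90% sure" but is right only half the time is badly calibrated.
print(expected_calibration_error([0.9] * 10, [True] * 5 + [False] * 5))  # about 0.4
```

A rising ECE in production suggests that confidence-based gates and human-review thresholds are no longer trustworthy, even if accuracy has not yet moved.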

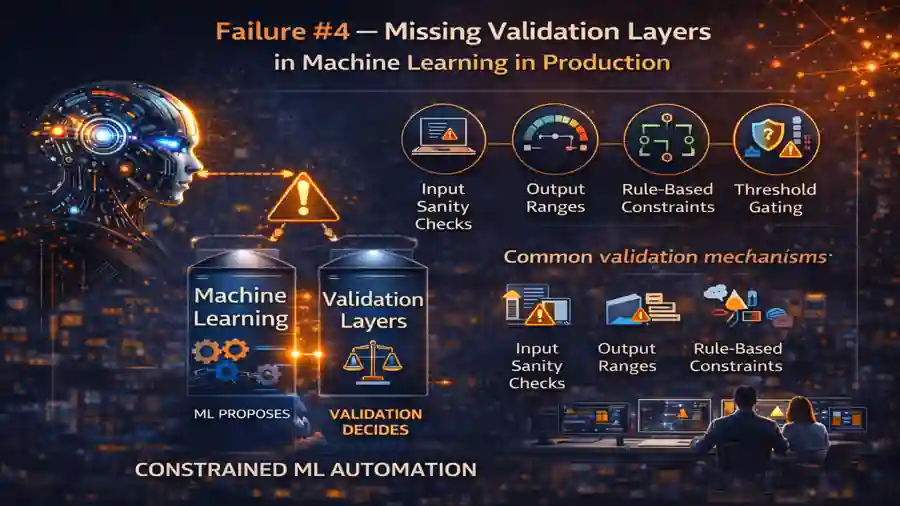

Failure #4 — Missing Validation Layers in Machine Learning in Production

Automation without validation introduces risk rather than efficiency. In enterprise systems, machine learning outputs must be constrained by deterministic checks that reflect business logic, safety requirements, and regulatory boundaries.

Validation layers serve as guardrails, ensuring that predictions remain plausible and actionable. They do not replace machine learning; they complement it.

Common validation mechanisms include:

- Input sanity checks
- Output range enforcement
- Rule-based constraints
- Threshold and confidence gating

A recurring pattern in mature systems is the deliberate separation of suggestion and authority. Machine learning proposes actions, but validation decides whether those actions proceed.

In enterprise contexts, Machine Learning in Production succeeds when probabilistic intelligence operates within explicit boundaries.
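The four mechanisms above can be chained into a single deterministic function that receives the model's suggestion and decides whether it proceeds. The sketch assumes a hypothetical model that recommends a credit limit; the limits, the income rule, and the confidence threshold are invented for illustration and would come from business and compliance owners in practice.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Decision:
    action: str  # "proceed", "escalate", or "block"
    reason: str

# Hypothetical limits for a model that recommends a credit limit.
MIN_LIMIT, MAX_LIMIT = 500.0, 50_000.0
MAX_LIMIT_TO_INCOME = 0.5
MIN_CONFIDENCE = 0.8

def validate(income: Optional[float], predicted_limit: float, confidence: float) -> Decision:
    """Deterministic guardrail: the model only suggests; this function decides."""
    # 1. Input sanity check
    if income is None or income <= 0:
        return Decision("block", "invalid input: income missing or non-positive")
    # 2. Output range enforcement
    if not MIN_LIMIT <= predicted_limit <= MAX_LIMIT:
        return Decision("escalate", "prediction outside permitted range")
    # 3. Rule-based business constraint
    if predicted_limit > MAX_LIMIT_TO_INCOME * income:
        return Decision("escalate", "limit exceeds allowed share of income")
    # 4. Threshold and confidence gating
    if confidence < MIN_CONFIDENCE:
        return Decision("escalate", "confidence below threshold")
    return Decision("proceed", "all checks passed")

print(validate(80_000, 10_000, 0.95))  # proceeds
print(validate(10_000, 8_000, 0.95))   # escalated by the income rule
```

Every rejected or escalated suggestion carries a human-readable reason, which also gives auditors a trace of why the model's output was not acted on.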

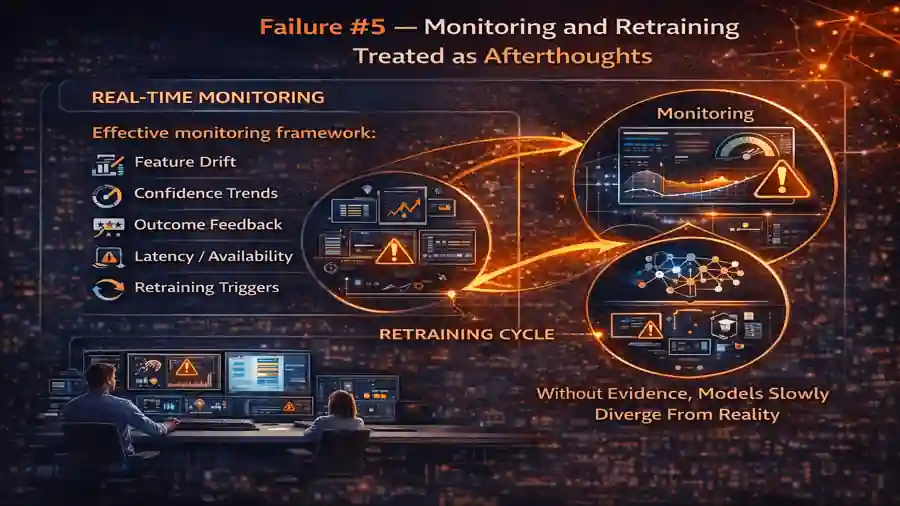

Failure #5 — Monitoring and Retraining Treated as Afterthoughts

Many systems include dashboards that track aggregate metrics, but true production monitoring goes further. Observability must extend beyond model performance into data behavior, decision outcomes, and system health.

Effective monitoring frameworks track:

- Feature distribution shifts
- Prediction confidence trends
- Outcome feedback when available
- Latency and availability metrics
- Retraining triggers based on evidence, not schedules

Retraining is not a routine task performed at fixed intervals. It is a response to observed change. Without this feedback loop, models slowly diverge from reality.
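An evidence-based trigger can be expressed as a function that inspects one monitoring window and returns the observed changes that justify retraining, rather than firing on a calendar. The sketch assumes drift scores (for example PSI) and outcome-based error rates are already computed upstream; `MonitoringWindow` and all thresholds are hypothetical.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class MonitoringWindow:
    max_feature_psi: float      # largest per-feature drift score in this window
    live_error_rate: float      # from outcome feedback, once labels arrive
    baseline_error_rate: float  # error rate measured when the model was deployed
    labeled_samples: int        # how much outcome feedback backs live_error_rate

# Hypothetical thresholds; real values depend on the use case and the cost of errors.
PSI_THRESHOLD = 0.25
MAX_ERROR_INCREASE = 0.02   # absolute increase, i.e. two percentage points
MIN_LABELED_SAMPLES = 500

def retraining_evidence(w: MonitoringWindow) -> List[str]:
    """Return the observed changes that justify retraining; empty means 'not yet'."""
    evidence = []
    if w.max_feature_psi > PSI_THRESHOLD:
        evidence.append(f"input drift: PSI {w.max_feature_psi:.2f}")
    # Only trust outcome feedback once enough labeled outcomes have arrived.
    if w.labeled_samples >= MIN_LABELED_SAMPLES:
        increase = w.live_error_rate - w.baseline_error_rate
        if increase > MAX_ERROR_INCREASE:
            evidence.append(f"outcome degradation: error rate up {increase:.1%}")
    return evidence

print(retraining_evidence(MonitoringWindow(0.05, 0.15, 0.10, 1000)))
```

Returning the evidence, rather than a bare boolean, lets the retraining job record why it ran, which supports the traceability discussed later in the article.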

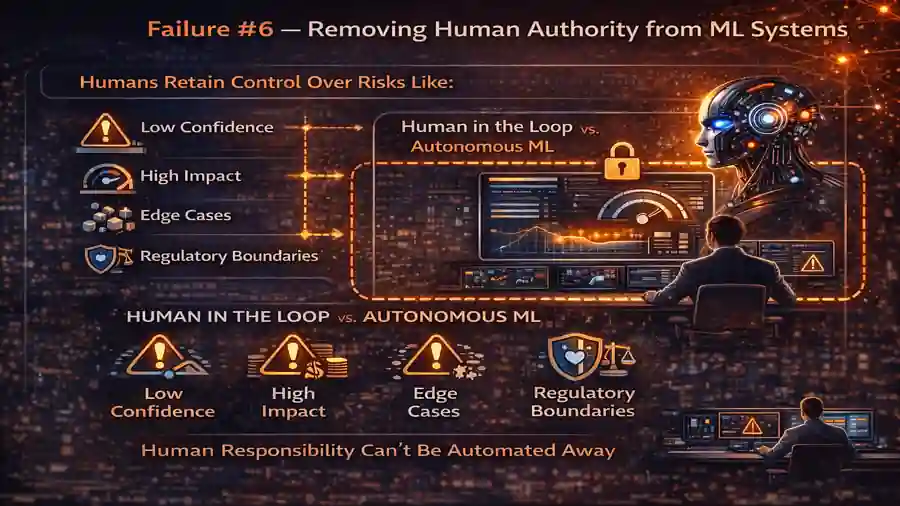

Failure #6 — Removing Human Authority from ML Systems

Enterprises operate within legal, ethical, and reputational constraints. No machine learning system can absorb accountability on behalf of an organization.

Human authority must remain explicit in Machine Learning in Production, particularly when decisions carry significant consequences.

Humans should retain control in scenarios involving:

- Low-confidence predictions
- High-impact decisions
- Edge cases outside training distribution
- Regulatory or compliance boundaries

Human-in-the-loop design is not a sign of mistrust in AI. It is a recognition that responsibility cannot be automated away.
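The four scenarios above translate naturally into an explicit routing rule that lists every reason a case must go to a person. The sketch is illustrative: the thresholds are hypothetical, and the per-feature z-score is only a crude stand-in for proper out-of-distribution detection.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Prediction:
    confidence: float
    amount: float              # monetary exposure of the decision
    feature_zscore_max: float  # largest |z-score| of any input vs. training statistics
    regulated: bool            # whether the case falls under a compliance rule

# Hypothetical escalation thresholds.
CONFIDENCE_FLOOR = 0.85
HIGH_IMPACT_AMOUNT = 10_000.0
OOD_ZSCORE = 4.0

def human_review_reasons(p: Prediction) -> List[str]:
    """Every reason a human must decide; an empty list means automation may proceed."""
    reasons = []
    if p.confidence < CONFIDENCE_FLOOR:
        reasons.append("low confidence")
    if p.amount >= HIGH_IMPACT_AMOUNT:
        reasons.append("high impact")
    if p.feature_zscore_max > OOD_ZSCORE:
        reasons.append("outside training distribution")
    if p.regulated:
        reasons.append("regulatory boundary")
    return reasons

print(human_review_reasons(Prediction(0.95, 500.0, 1.0, False)))  # []
```

Collecting all reasons instead of stopping at the first one gives the reviewer full context and makes escalation patterns easy to analyze later.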

Failure #7 — No Clear Ownership of the ML Lifecycle

Machine learning initiatives often fail when responsibility is diffuse. When “everyone” owns the system, no one is accountable for its long-term behavior.

Mature organizations define ownership explicitly.

| Area | Owner |
|---|---|
| Data quality | Data engineering |
| Model behavior | ML engineering |
| Decision impact | Business stakeholders |
| Governance | Risk and compliance |

Clear ownership ensures that issues are addressed promptly and that trade-offs are made consciously rather than implicitly.

What AI Experts Do Differently in Production ML Systems

Experienced practitioners approach Machine Learning in Production with a different mindset. They focus less on maximizing benchmark performance and more on preventing failure.

Key differences include:

- Stress-testing assumptions rather than optimizing metrics
- Designing for degradation rather than ideal behavior
- Prioritizing explainability and traceability
- Building kill-switches and override mechanisms
- Treating models as evolving components, not finished artifacts

This perspective reflects an understanding that production systems are living entities. Stability emerges from discipline, not cleverness.
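A kill-switch and a designed degradation path can be combined in a thin wrapper: operators can disable the model at runtime, and any model failure falls back to a deterministic rule instead of failing the request. The sketch below is a minimal illustration; `ModelWithKillSwitch` is a hypothetical name, and keeping the flag as a local attribute is a simplification, since a multi-instance deployment would normally read it from shared configuration.

```python
from typing import Callable, Mapping, Tuple

Features = Mapping[str, float]

class ModelWithKillSwitch:
    """Wraps a model so operators can switch it off without a redeploy.

    When disabled, or when the model raises, requests are answered by a
    deterministic fallback rule, and the response records which path was used.
    """

    def __init__(self, model: Callable[[Features], float],
                 fallback: Callable[[Features], float]) -> None:
        self._model = model
        self._fallback = fallback
        # Assumption: in a multi-instance deployment this flag would live in
        # shared configuration; a local attribute keeps the sketch small.
        self.enabled = True

    def predict(self, features: Features) -> Tuple[float, str]:
        if not self.enabled:
            return self._fallback(features), "fallback:disabled"
        try:
            return self._model(features), "model"
        except Exception:
            # Degrade to the rule instead of failing the request
            # (a real system would also log and alert here).
            return self._fallback(features), "fallback:error"

svc = ModelWithKillSwitch(model=lambda f: 0.9, fallback=lambda f: 0.0)
print(svc.predict({}))  # (0.9, 'model')
svc.enabled = False     # operator pulls the kill-switch
print(svc.predict({}))  # (0.0, 'fallback:disabled')
```

Tagging each response with its source keeps the fallback path traceable, so teams can measure how often the system is running without the model.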

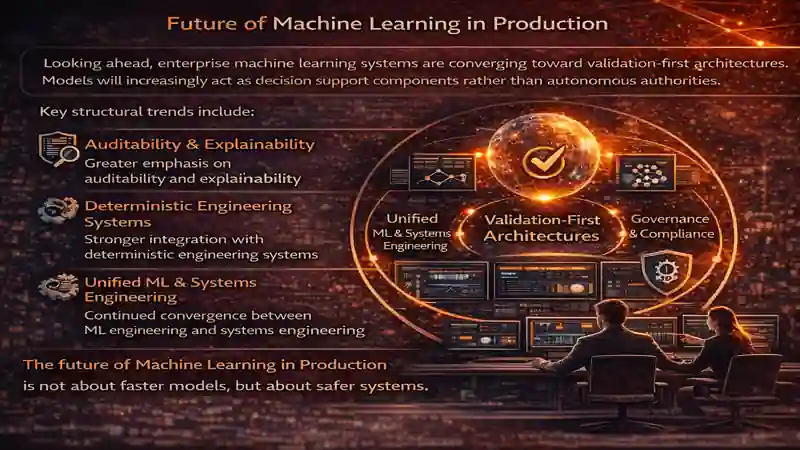

Future of Machine Learning in Production

Looking ahead, enterprise machine learning systems are converging toward validation-first architectures. Models will increasingly act as decision support components rather than autonomous authorities.

Key structural trends include:

- Greater emphasis on auditability and explainability
- Stronger integration with deterministic engineering systems
- Explicit governance frameworks embedded into ML pipelines
- Continued convergence between ML engineering and systems engineering

The future of Machine Learning in Production is not about faster models, but about safer systems.

Conclusion — Production ML Is an Engineering Discipline

Machine Learning in Production succeeds when it is treated as an engineering discipline rather than an experimental exercise. Models do not fail in isolation. Systems fail when assumptions remain implicit and accountability is unclear.

Enterprise teams that design for validation, ownership, and adaptability build systems that endure beyond initial deployment. In those environments, machine learning becomes a reliable partner rather than a fragile dependency.

The difference is not better algorithms.

It is better system thinking.



FAQs on Machine Learning in Production

1. What is Machine Learning in Production?

Machine Learning in Production refers to deploying and operating ML models as part of real business systems, where reliability, governance, and accountability matter more than experimental accuracy.

Unlike research environments, production ML must handle changing data, real users, regulatory constraints, and long-term maintenance.

2. Why do Machine Learning models fail after deployment?

Most failures surface after deployment, even when their causes were introduced during design and training.

Common causes include data drift, training–serving skew, missing validation layers, and lack of ownership. These issues gradually degrade system behavior even when models initially perform well.

3. How is Machine Learning in Production different from research ML?

Research ML focuses on discovering patterns and optimizing metrics on static datasets.

Production ML focuses on system stability, predictable behavior, and safe decision-making over time, often under changing data and business conditions.

4. What is data drift and why is it dangerous in production ML?

Data drift occurs when live data differs from training data.

In production environments, drift can silently reduce decision quality without triggering obvious alerts, making it one of the most dangerous risks in deployed ML systems.

5. What is training–serving skew in production ML systems?

Training–serving skew happens when features used during model training differ from those used during live inference.

Even small inconsistencies in preprocessing, time windows, or defaults can cause unpredictable behavior once models are deployed.

6. Why is model accuracy not enough in production environments?

Accuracy measures performance on historical data.

Production systems operate prospectively, where rare but costly errors, confidence calibration, and real-world impact matter more than average accuracy scores.

7. What metrics should enterprises monitor instead of accuracy?

Enterprise ML teams track outcome-oriented signals such as:

- Error severity
- Confidence trends
- Decision reversals
- Business impact
- Customer complaints or escalations

These metrics reflect real operational risk, not just model performance.

8. Why are validation layers critical in Machine Learning in Production?

Validation layers act as guardrails that constrain ML outputs using deterministic rules, thresholds, and sanity checks.

They ensure models operate within acceptable boundaries, especially in regulated or high-impact systems.

9. Can rule-based systems and machine learning work together?

Yes. Mature systems combine both.

Rules enforce known constraints and safety limits, while machine learning handles uncertainty within those boundaries. This hybrid approach is common in enterprise environments.

10. How often should production ML models be retrained?

Models should be retrained based on evidence, not fixed schedules.

Drift signals, outcome feedback, and performance degradation should trigger retraining decisions rather than arbitrary timelines.

11. Why is monitoring not enough without retraining strategies?

Monitoring only identifies problems.

Without defined retraining and response mechanisms, models continue operating on outdated assumptions, leading to gradual but persistent system failure.

12. What role do humans play in production ML systems?

Human authority remains essential in high-impact, low-confidence, or edge-case decisions.

Human-in-the-loop design ensures accountability, regulatory compliance, and ethical responsibility in production ML systems.

13. Who should own Machine Learning in Production systems?

Ownership must be explicit and distributed across roles:

- Data quality → Data engineering
- Model behavior → ML engineering
- Decision impact → Business stakeholders
- Governance → Risk and compliance teams

Clear ownership prevents accountability gaps.

14. Is explainability mandatory for enterprise ML systems?

In many industries, yes.

Explainability supports audits, regulatory reviews, incident investigations, and trust among stakeholders, making it increasingly mandatory in production deployments.

15. What is the future of Machine Learning in Production?

The future of Machine Learning in Production is validation-first and governance-driven.

Models will increasingly act as decision-support systems integrated with deterministic engineering workflows, prioritizing safety, auditability, and long-term reliability over raw performance.