Introduction to Pre-Trained Models in Machine Learning

Machine Learning has transformed the way businesses, researchers, and developers build intelligent applications. But training models from scratch often demands millions of data points and weeks of computation—a luxury most cannot afford.

This is where pre-trained models in machine learning come in. These models are trained on massive datasets and can be reused for tasks like image recognition, natural language processing, speech understanding, and multimodal AI.

In this guide, we’ll explore the leading pre-trained models of 2025, their types, benefits, use cases, and how you can leverage them in your own projects.

Advantages of Pre-Trained Models

Pre-trained models are popular because they accelerate innovation. Key benefits include:

- ✅ Reduced training time – no need to start from scratch.
- ✅ Lower data requirements – good performance is often achievable with limited labeled data.
- ✅ Higher accuracy – models start from representations learned on massive datasets.
- ✅ Cost-effective – fewer GPU/TPU resources needed.
- ✅ Accessible to startups & individuals – enables rapid prototyping.

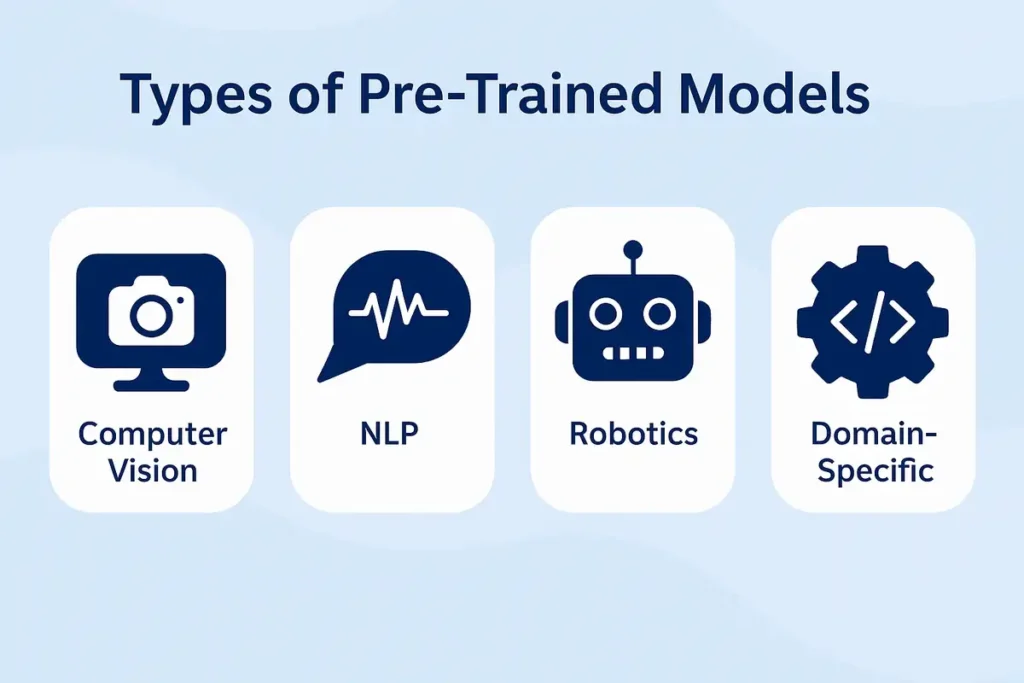

Types of Pre-Trained Models

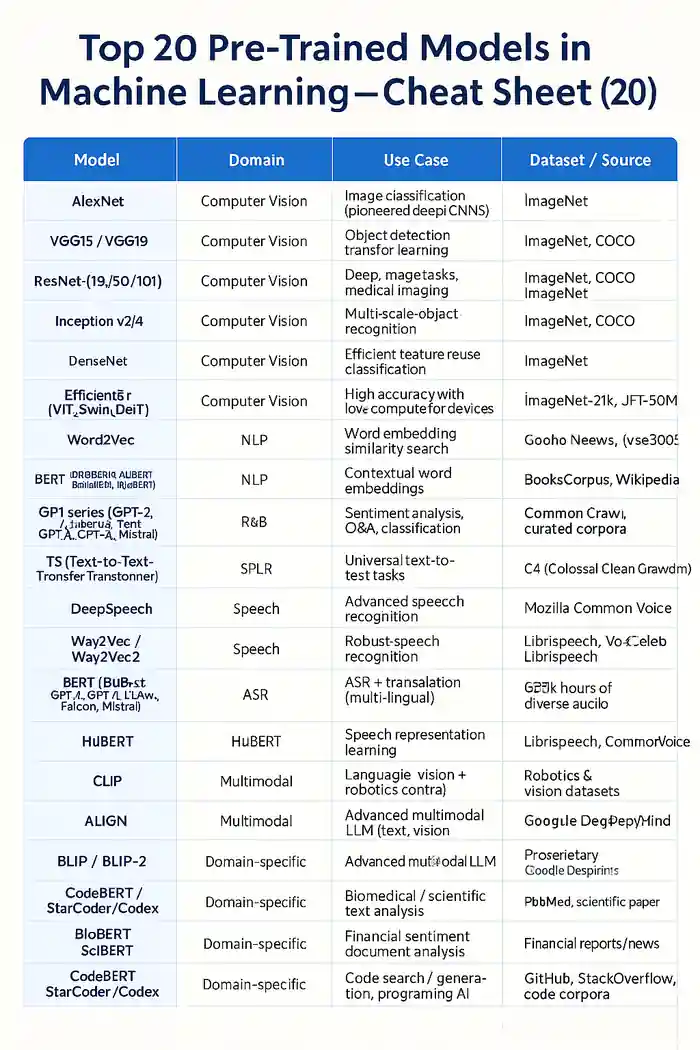

🔹 Computer Vision (CV) Models

- AlexNet – the first deep CNN breakthrough (ImageNet 2012 winner).
- VGG16 / VGG19 – deeper CNNs, widely used in transfer learning.
- ResNet (18/50/101) – residual connections mitigate vanishing gradients in very deep networks.
- Inception v3/v4 – efficient multi-scale recognition.
- DenseNet – dense connections for feature reuse.
- EfficientNet – compound scaling of depth, width, and resolution for a strong accuracy-to-compute trade-off.
- MobileNet – lightweight for mobile/IoT.
- Vision Transformers (ViT, Swin, DeiT) – transformer-based image recognition.
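The ResNet entry mentions residual connections, so here is a brief sketch of the idea. The symbols are generic notation chosen for illustration ($x$ for a block's input, $F$ for the block's learned layers, $y$ for its output), not taken from any particular implementation:

```latex
y = x + F(x), \qquad
\frac{\partial y}{\partial x} = I + \frac{\partial F}{\partial x}
```

Because of the identity term $I$, the gradient flowing back through a block always has a direct path to earlier layers. This is one common explanation for why very deep residual networks remain trainable.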

🔹 Natural Language Processing (NLP) Models

- Word2Vec / GloVe / FastText – static word embeddings.
- ELMo – contextual embeddings.
- BERT and family (RoBERTa, DistilBERT, ALBERT, BioBERT) – bidirectional transformer encoders for text.
- GPT series (GPT-2, GPT-3, GPT-4) and open LLMs (LLaMA, Falcon, Mistral) – autoregressive generative models.
- T5 (Text-to-Text Transfer Transformer) – casts every NLP task as text-to-text.
- XLNet – permutation-based language modeling objective.
- BLOOM – multilingual, community-driven LLM.

🔹 Speech Models

- DeepSpeech (Mozilla) – early open-source ASR.
- Wav2Vec / Wav2Vec2 (Meta) – robust speech recognition.
- Whisper (OpenAI) – multilingual ASR + translation.
- HuBERT – speech representation learning.
- Conformer – CNN + Transformer hybrid.

🔹 Multimodal Models

- CLIP (OpenAI) – text-image alignment.
- ALIGN (Google) – massive image-text learning.
- BLIP / BLIP-2 – captioning & vision-language tasks.
- Flamingo (DeepMind) – few-shot multimodal reasoning.
- PaLM-E (Google) – robotics + language + vision.
- Gemini (Google DeepMind) – cutting-edge multimodal LLM.
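To make "text-image alignment" more concrete, here is a minimal sketch of the scoring step a CLIP-style model can use for zero-shot classification: embed the image and one text prompt per label in the same vector space, then compare them by cosine similarity. The embedding vectors, the prompts, and the `temperature` value below are all made-up assumptions for illustration; a real model would produce the embeddings with its image and text encoders.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def zero_shot_classify(image_vec, text_vecs, temperature=0.01):
    """Score one image embedding against a dict of {prompt: text embedding}."""
    labels = list(text_vecs)
    scaled = [cosine(image_vec, text_vecs[label]) / temperature for label in labels]
    return dict(zip(labels, softmax(scaled)))


# Hypothetical embeddings standing in for real encoder outputs.
image_embedding = [0.9, 0.1, 0.2]
text_embeddings = {
    "a photo of a cat": [0.8, 0.2, 0.1],
    "a photo of a dog": [0.1, 0.9, 0.3],
}

probs = zero_shot_classify(image_embedding, text_embeddings)
best_label = max(probs, key=probs.get)
print(best_label)
```

No label-specific training happens here: adding a new class only means adding a new text prompt, which is what makes this family of models attractive for zero-shot use.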

🔹 Domain-Specific Models

- BioBERT / SciBERT – biomedical & scientific NLP.
- FinBERT – financial text analysis.
- MedCLIP – healthcare image + text.
- CodeBERT / StarCoder / Codex – programming/code generation.

Popular Repositories for Pre-Trained Models

- Hugging Face Model Hub – the largest collection of open-source NLP, CV, and LLM checkpoints.
- TensorFlow Hub – plug-and-play TensorFlow models.
- PyTorch Hub – ready-to-use PyTorch models.
- OpenAI & Meta Releases – cutting-edge large models (GPT, LLaMA).

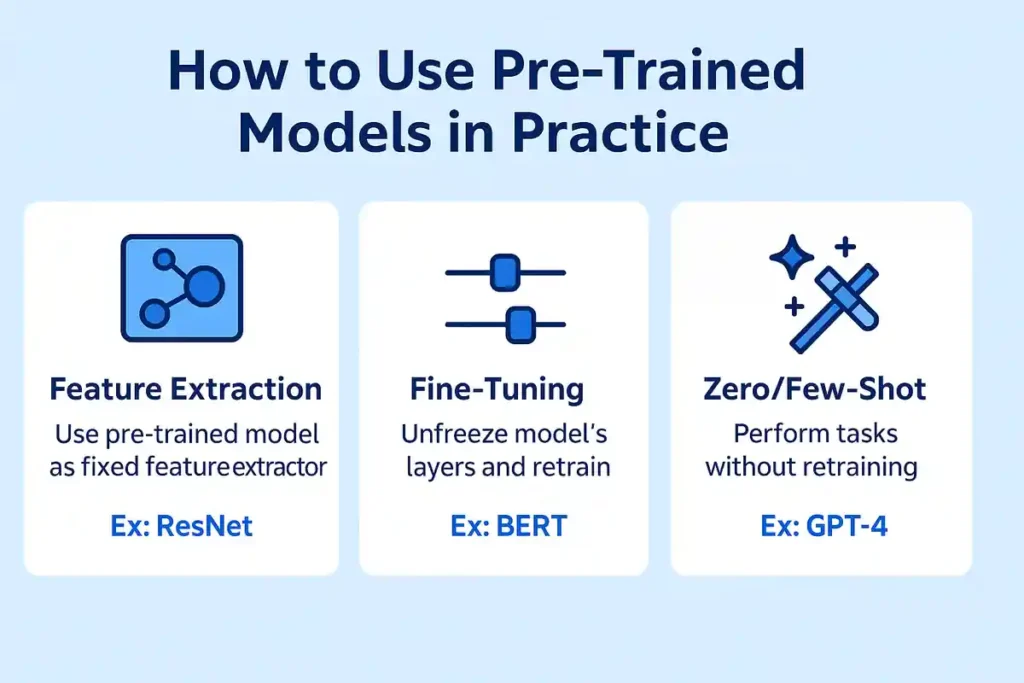

How to Use Pre-Trained Models in Practice

✅ Feature Extraction

Use pre-trained layers as feature extractors to represent your data.
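As a rough illustration of feature extraction, the sketch below keeps an encoder fixed and trains only a tiny classifier (nearest centroid) on the features it produces. The `frozen_encoder` function is a hypothetical stand-in for a real pre-trained backbone such as ResNet or BERT, and the data is invented; only the overall pattern is the point.

```python
def frozen_encoder(x):
    """Stand-in for a pre-trained backbone: a fixed mapping that is never updated."""
    a, b = x
    return (a + b, a - b, a * b)


def fit_centroids(samples, labels):
    """Average the frozen features of each class to get one centroid per label."""
    sums, counts = {}, {}
    for x, y in zip(samples, labels):
        feat = frozen_encoder(x)
        acc = sums.setdefault(y, [0.0] * len(feat))
        for i, value in enumerate(feat):
            acc[i] += value
        counts[y] = counts.get(y, 0) + 1
    return {y: [v / counts[y] for v in acc] for y, acc in sums.items()}


def predict(centroids, x):
    """Assign x to the label whose centroid is closest in feature space."""
    feat = frozen_encoder(x)

    def sq_dist(centroid):
        return sum((f - c) ** 2 for f, c in zip(feat, centroid))

    return min(centroids, key=lambda label: sq_dist(centroids[label]))


# Tiny invented dataset: four labeled points are enough for the lightweight head.
train_x = [(1.0, 1.0), (1.2, 0.8), (-1.0, -1.0), (-0.9, -1.1)]
train_y = ["pos", "pos", "neg", "neg"]

centroids = fit_centroids(train_x, train_y)
prediction = predict(centroids, (0.8, 1.1))
print(prediction)
```

With a real backbone, the same structure applies: compute embeddings once, cache them, and fit any small model on top.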

✅ Transfer Learning with Fine-Tuning

Retrain the last few layers on your dataset for domain-specific tasks.
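The following toy sketch shows the mechanics of that idea in plain Python, under heavy simplifying assumptions: the "pre-trained" part is a single frozen weight, the "last layer" is a one-weight head with a bias, and the dataset is four invented points. Gradient descent updates only the head, while the frozen weight is read but never changed.

```python
def fine_tune_head(data, frozen_w, head_w=0.0, head_b=0.0, lr=0.2, epochs=500):
    """Fit only the head (head_w, head_b) by gradient descent on mean squared error."""
    n = len(data)
    for _ in range(epochs):
        grad_w = 0.0
        grad_b = 0.0
        for x, target in data:
            feature = frozen_w * x  # output of the frozen "pre-trained" layer
            error = head_w * feature + head_b - target
            grad_w += 2 * error * feature / n
            grad_b += 2 * error / n
        head_w -= lr * grad_w
        head_b -= lr * grad_b
    return head_w, head_b


def mse(data, frozen_w, head_w, head_b):
    """Mean squared error of the two-stage model on the dataset."""
    return sum((head_w * frozen_w * x + head_b - t) ** 2 for x, t in data) / len(data)


# Invented task data following target = 2 * x + 1.
data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]
frozen_w = 0.5  # hypothetical pre-trained weight, kept fixed throughout

loss_before = mse(data, frozen_w, 0.0, 0.0)
head_w, head_b = fine_tune_head(data, frozen_w)
loss_after = mse(data, frozen_w, head_w, head_b)
print(loss_before, loss_after)
```

In a deep learning framework the same split is usually expressed by disabling gradients on the backbone and passing only the head's parameters to the optimizer.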

✅ Zero-shot & Few-shot Learning

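Prompt a large language model to perform a task from instructions alone (zero-shot) or from a handful of examples placed in the prompt (few-shot), with no retraining.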
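One way to picture the difference is in how the prompt is assembled. The helper below is a hypothetical sketch (the `Text:` / `Label:` layout is just one possible format, not a requirement of any model or API): with an empty example list it produces a zero-shot prompt, and with a few labeled examples it produces a few-shot prompt.

```python
def build_prompt(instruction, examples, query):
    """Assemble a zero-shot (no examples) or few-shot prompt as plain text."""
    lines = [instruction.strip(), ""]
    for text, label in examples:
        lines.append(f"Text: {text}")
        lines.append(f"Label: {label}")
        lines.append("")
    lines.append(f"Text: {query}")
    lines.append("Label:")  # left open for the model to complete
    return "\n".join(lines)


instruction = "Classify the sentiment of each text as positive or negative."
examples = [
    ("I loved this film.", "positive"),
    ("The service was terrible.", "negative"),
]

few_shot = build_prompt(instruction, examples, "The battery lasts all day.")
zero_shot = build_prompt(instruction, [], "The battery lasts all day.")
print(few_shot)
```

The resulting string would then be sent to whichever LLM you are using; the model weights stay untouched in both cases.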

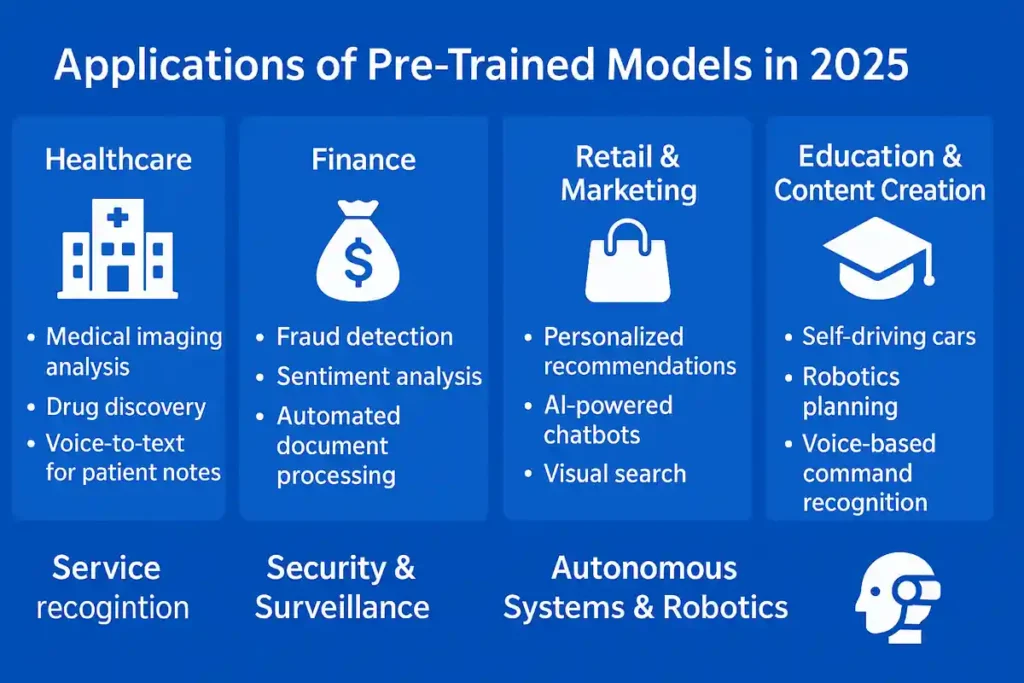

Applications of Pre-Trained Models in 2025

- Healthcare → medical imaging with ResNet/ViT, biomedical text mining for drug discovery with BioBERT.
- Finance → fraud detection, sentiment analysis with FinBERT.
- Retail & Marketing → chatbots with GPT, personalization with BERT.
- Security → face recognition (VGGFace, ResNet).
- Entertainment → image-to-text models for captioning, AI content creation.

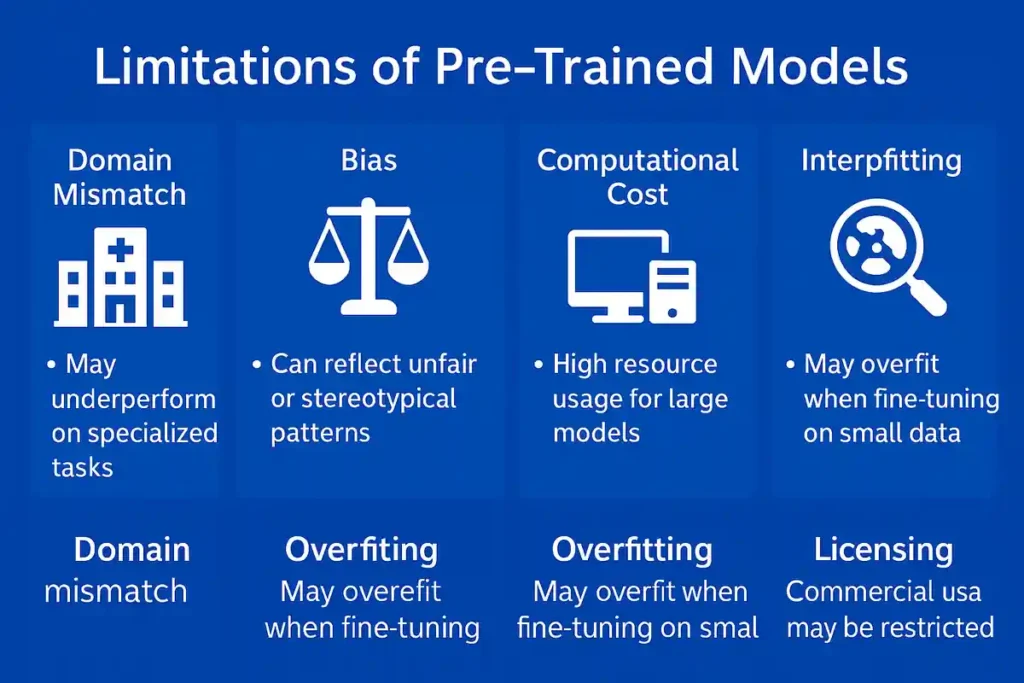

Limitations of Pre-Trained Models

- ⚠️ Domain mismatch – general models may fail on niche data.
- ⚠️ Bias & fairness issues – inherited from training data.
- ⚠️ Compute costs – very large models (GPT-4, Gemini) are resource-heavy.
- ⚠️ Explainability – hard to interpret deep transformer decisions.
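To put the compute-cost point in rough numbers, the sketch below estimates the memory needed just to hold a model's weights: parameter count times bytes per parameter. The 7-billion-parameter figure is an arbitrary example size, not a claim about any specific model, and the estimate ignores activations, optimizer state, and caches, which add more on top.

```python
# Bytes used to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(num_params, dtype="fp16"):
    """Memory needed to hold the weights alone, in gigabytes (10**9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


example_params = 7_000_000_000  # hypothetical model size for illustration
for dtype in ("fp32", "fp16", "int8"):
    print(dtype, weight_memory_gb(example_params, dtype), "GB")
```

Even this lower bound shows why reduced precision and smaller models matter when the target hardware is a single GPU or an edge device.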

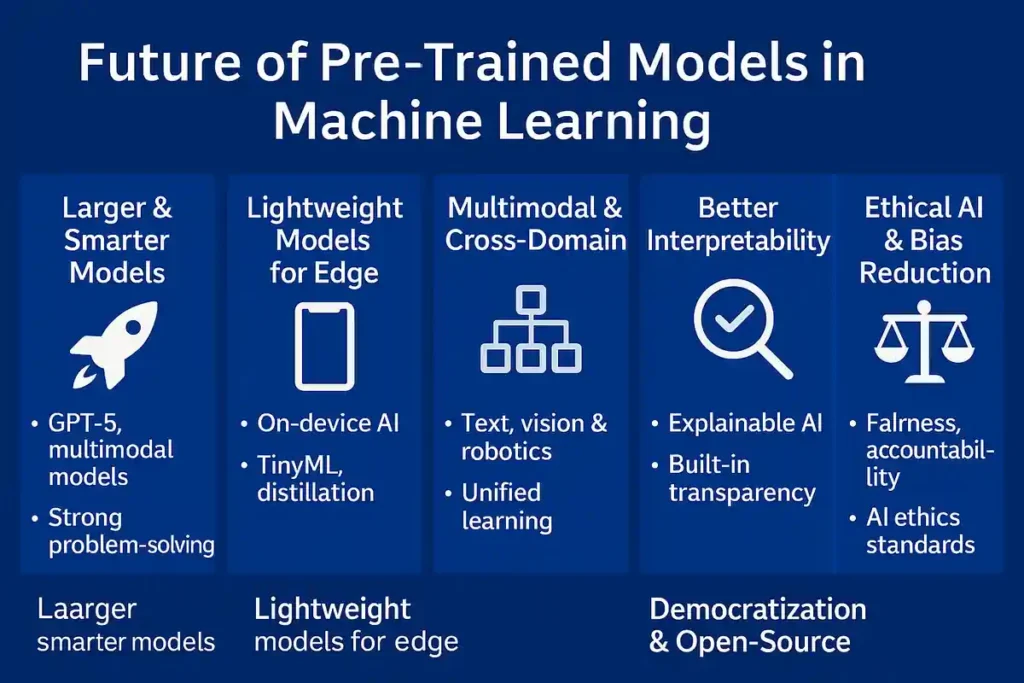

Future of Pre-Trained Models in Machine Learning

- 🚀 Scaling to trillion-parameter LLMs.
- 🌍 Rise of open-source models (LLaMA, Falcon, Mistral) vs proprietary (GPT, Gemini).
- 📱 Edge AI – lightweight pre-trained models for smartphones, IoT.
- 🔒 Safer AI – models with built-in bias reduction and ethical guardrails.

Getting Started with Pre-Trained Models

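Example: Load a BERT-Family Model with Hugging Face (Python)

    from transformers import pipeline

    # Name the checkpoint explicitly: a DistilBERT model fine-tuned on SST-2,
    # which is the usual default for the "sentiment-analysis" task.
    nlp = pipeline("sentiment-analysis", model="distilbert-base-uncased-finetuned-sst-2-english")
    print(nlp("The Tech Thinker blog is amazing!"))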

Example: Transfer Learning with ResNet (PyTorch)

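    import torch
    import torchvision.models as models

    # `weights=` replaces the deprecated `pretrained=True` (torchvision 0.13+)
    resnet = models.resnet50(weights=models.ResNet50_Weights.DEFAULT)
    for param in resnet.parameters():
        param.requires_grad = False  # freeze the pre-trained layers
    # New trainable head; 10 is a placeholder for your dataset's class count
    resnet.fc = torch.nn.Linear(resnet.fc.in_features, 10)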

Conclusion

Pre-trained models in machine learning are now the foundation of modern AI development. They save time, improve accuracy, and open opportunities for startups, enterprises, and researchers alike.

By leveraging Hugging Face, TensorFlow Hub, and PyTorch Hub, you can easily integrate state-of-the-art models into your projects without reinventing the wheel.

Frequently Asked Questions (FAQs) on Pre-Trained Models in Machine Learning

1. What are pre-trained models in machine learning?

Pre-trained models in machine learning are models that have already been trained on large datasets and can be reused for similar tasks like image classification, text analysis, or speech recognition.

2. Why use pre-trained models in machine learning?

Pre-trained models in machine learning save time, reduce data requirements, improve accuracy, and lower computational costs compared to training models from scratch.

3. What are the advantages of pre-trained models in machine learning?

The main advantages include faster development, better performance with limited data, cost savings, and easy accessibility for developers and businesses.

4. What are the types of pre-trained models in machine learning?

Pre-trained models in machine learning include computer vision models (ResNet, EfficientNet), NLP models (BERT, GPT), speech models (Whisper, Wav2Vec), and multimodal models (CLIP, Gemini).

5. Can I fine-tune pre-trained models in machine learning?

Yes. Fine-tuning allows you to adapt pre-trained models in machine learning to your specific dataset and task by retraining certain layers.

6. Where can I find pre-trained models in machine learning?

Popular repositories include Hugging Face Model Hub, TensorFlow Hub, and PyTorch Hub, which host thousands of pre-trained models in machine learning.

7. What are some examples of pre-trained models in machine learning?

Examples include ResNet and Vision Transformer (CV), BERT and GPT (NLP), Whisper (speech), and CLIP (multimodal).

8. How do pre-trained models in machine learning help startups?

Startups use pre-trained models in machine learning to cut down on compute costs and quickly deploy AI applications without massive training data.

9. Are pre-trained models in machine learning free to use?

Many pre-trained models in machine learning are free and open-source, while advanced proprietary models like GPT-4 or Gemini may require paid API access.

10. How accurate are pre-trained models in machine learning?

Pre-trained models in machine learning often reach high accuracy because they learn from massive datasets like ImageNet or Common Crawl, but performance varies by domain and usually improves with fine-tuning on task-specific data.

11. What are the limitations of pre-trained models in machine learning?

Limitations include domain mismatch, model bias, high resource usage for large models, and limited interpretability.

12. How do pre-trained models in machine learning support transfer learning?

Pre-trained models in machine learning are the backbone of transfer learning, where general knowledge from large datasets is reused for specialized tasks.

13. What are the best NLP pre-trained models in machine learning?

The best NLP pre-trained models in machine learning include BERT, RoBERTa, GPT-4, LLaMA, T5, and BLOOM.

14. What are the best computer vision pre-trained models in machine learning?

Top computer vision pre-trained models in machine learning include ResNet, EfficientNet, MobileNet, DenseNet, and Vision Transformers.

15. What is the future of pre-trained models in machine learning?

The future of pre-trained models in machine learning lies in larger open-source LLMs, lightweight models for edge devices, and multimodal AI like Gemini.