Introduction to Hallucination in AI

Artificial intelligence has become a part of our daily workflows—writing emails, generating code, summarizing documents, fixing errors, even supporting engineering design. But while AI can sound incredibly confident, it sometimes produces information that is completely incorrect. This behaviour is known as a hallucination in AI.

And the surprising part?

Even the most advanced systems in 2026—GPT-4o, Gemini Ultra, Claude 3, and LLaMA 3—still hallucinate in certain situations.

If you’ve ever seen an AI confidently give wrong facts, cite books that don’t exist, or fabricate API functions, you’ve already experienced hallucination in action.

This article breaks everything down in a natural, human-friendly way—what hallucination means, why it happens, real examples, and how we can reduce it using modern AI techniques like RAG (Retrieval-Augmented Generation).

What is Hallucination in AI?

The Basic Meaning of Hallucination in AI

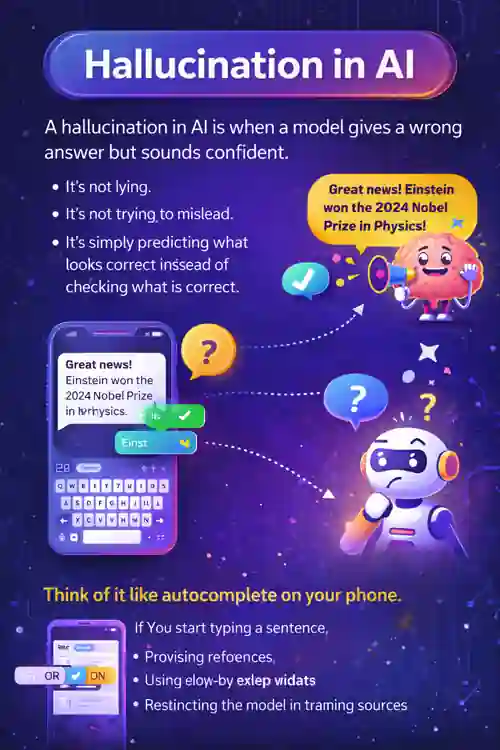

In the simplest terms:

A hallucination in AI is when a model gives a wrong answer but sounds confident.

It’s not lying.

It’s not trying to mislead.

It’s simply predicting what looks correct instead of checking what is correct.

Think of it like autocomplete on your phone.

If you start typing a sentence, your phone predicts the next word based on patterns—not truth.

LLMs work the same way.

Technical Explanation of Hallucination in AI

A deeper look:

- AI models don’t know facts.
- They predict the next most likely word/token.
- If the model doesn’t have enough context or data, it fills the gap.
- This leads to fabricated answers that “sound” right but are wrong.

In technical terms:

- LLMs are probabilistic, not deterministic
- They generate coherent language, not validated facts
- They rely on training data that may be incomplete or outdated
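To make the “probabilistic” point concrete, here is a minimal sketch of next-token sampling. The candidate tokens and their scores are invented for illustration and do not come from any real model; the point is only that nothing in the procedure checks whether the chosen token is true.

```python
import math
import random

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(candidates, scores, temperature=1.0, rng=random):
    """Pick one candidate at random, weighted by its softmax probability."""
    scaled = [s / temperature for s in scores]
    probs = softmax(scaled)
    return rng.choices(candidates, weights=probs, k=1)[0]

# Hypothetical continuations of "The yield strength of this alloy is ..."
candidates = ["250 MPa", "275 MPa", "310 MPa", "unknown"]
scores = [2.1, 2.0, 1.7, 0.3]  # made-up scores, not from a real model

probs = softmax(scores)
for token, p in zip(candidates, probs):
    print(f"{token}: {p:.2f}")
print(sample_next_token(candidates, scores, temperature=0.7))
```

In this toy setup, “unknown” gets the lowest score, so the sampler almost always returns a specific, confident-looking number. That is the autocomplete behaviour described above in miniature.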

Real Examples of Hallucination in AI

Here are simple, real-world examples:

- Inventing a fake formula in SolidWorks API
- Giving imaginary citations in a research paper
- Creating new functions that don’t exist in Python
- Making up financial regulations
- Adding false engineering standards
- Displaying non-existent sitemap URLs in SEO tasks

This is why grounded, factual AI is becoming extremely important.
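For the “functions that don’t exist in Python” case, one cheap safeguard is to check a suggested name before trusting it. The helper below is a small sketch; `api_exists` is a name made up for this example, built only on the standard library’s `importlib.import_module`, `hasattr`, and `getattr`.

```python
import importlib

def api_exists(module_name, attr_path):
    """Return True if module_name imports and attr_path resolves inside it."""
    try:
        obj = importlib.import_module(module_name)
    except ImportError:
        return False
    for part in attr_path.split("."):
        if not hasattr(obj, part):
            return False
        obj = getattr(obj, part)
    return True

print(api_exists("json", "loads"))     # True: a real function
print(api_exists("json", "parse"))     # False: a JavaScript name, not Python
print(api_exists("os", "path.join"))   # True: dotted paths are followed
```

This only confirms that a name exists. It says nothing about whether the function does what the model claimed, so it is a first filter rather than a full check.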

Why Does Hallucination in AI Happen?

Hallucinations don’t happen randomly. They come from the way large language models are designed and how they generate text. LLMs don’t “know” things the way humans do—they predict the next most likely word based on patterns. When there are gaps, uncertainty, or missing information, the model tries to fill those spaces, which leads to believable but incorrect answers.

Here’s a more natural breakdown of why hallucinations occur:

1. Prediction-Based Output (Core Limitation of LLMs)

LLMs operate purely on probability.

They look at your question and try to predict the next best token based on training data.

If the model is unsure or the topic isn’t well represented in its knowledge, it still tries to complete the sentence in the most coherent way possible.

This “best guess” mechanism is the root cause of many hallucinations.

Example:

Ask an AI to recall a rare engineering standard — if it hasn’t seen it before, it may invent a realistic-sounding version.

2. Missing or Weak Context (AI Fills the Gaps)

When your prompt doesn’t include enough details, the model naturally assumes or imagines the missing parts.

AI behaves like a person asked to explain something with half the information.

It tries to “complete the story” to keep the conversation flowing.

Real scenario:

If you say, “Generate a BOM for this assembly,” without giving dimensions, material, or context, the AI will create something generic—and often incorrect.

3. Outdated or Incomplete Training Data

Most AI models have a knowledge cutoff.

A model trained in 2025 simply doesn’t know events, product releases, or standards introduced after that.

Instead of admitting it doesn’t know, the model may invent an answer because its job is to continue generating text.

Example:

Ask about a machine learning breakthrough from late 2026, and the model may hallucinate research papers or authors to “fit” your question.

4. Bad Prompts or Ambiguous Questions

LLMs rely heavily on the quality of the input.

If the question is unclear, open-ended, or impossible, the model tries to force an answer.

Example:

“Explain the timeline of SolidWorks 2027 features.”

If SolidWorks 2027 is not released, the model might:

- fabricate new features
- invent product release notes
- combine old CAD knowledge into new assumptions

It isn’t trying to mislead—it’s simply predicting what should come next for a question phrased as if the information already exists.

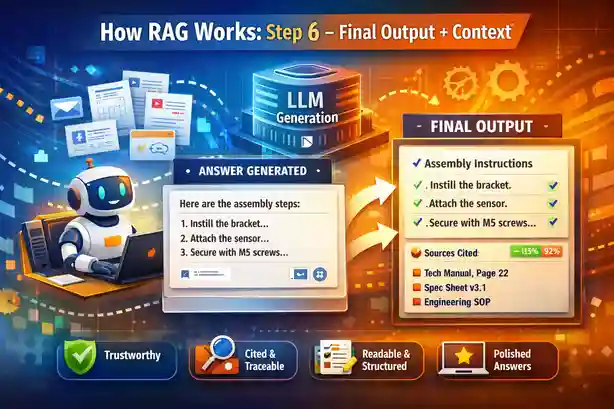

5. Poor Retrieval in RAG (When Grounding Fails)

RAG systems significantly reduce hallucinations—but only if retrieval works well.

When retrieval brings back irrelevant or shallow content, the LLM doesn’t have enough real context. So it compensates by filling the missing parts with imagination.

This leads to a special type of hallucination:

hallucinations that sound factual but aren’t grounded in the document.

Example:

If your vector database returns the wrong chunk for “Steel Connection Types,” the AI may invent connection names or mix timber and steel terminology.

Types of Hallucination in AI (2026 Update)

Here’s a clear breakdown:

| Type | Meaning | Example |
|---|---|---|
| Factual Hallucination | AI gives incorrect facts | Wrong dimensions for aerospace parts |
| Logical Hallucination | Faulty reasoning | Wrong calculation steps |
| Instruction Hallucination | Misinterprets user instructions | Answers a different question |
| Visual Hallucination | Wrong interpretation in images | Misidentifying objects |
| Citation Hallucination | Fake references or studies | Non-existing links or papers |

This classification helps engineers and builders diagnose issues.

Real-World Risks of Hallucination in AI

Hallucinations are not just academic—they can be dangerous depending on the domain.

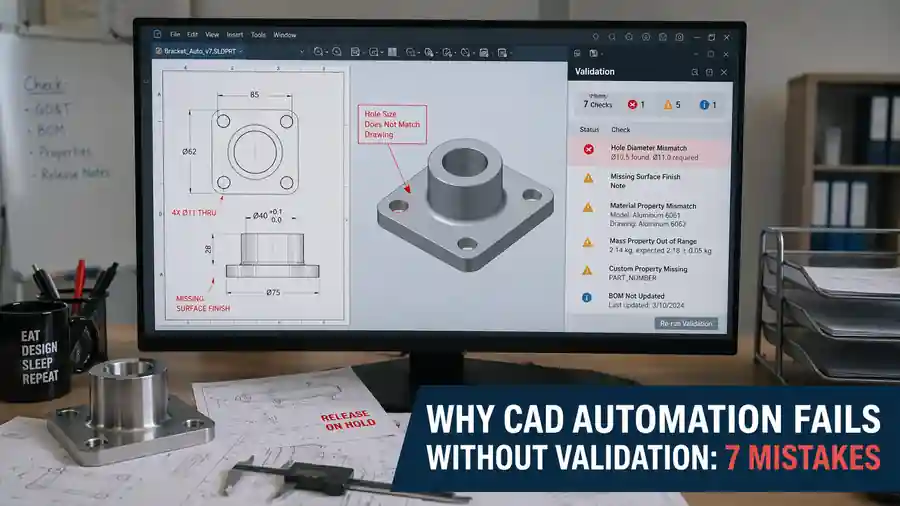

1. Engineering and CAD

- Wrong parameters
- Incorrect dimensions
- Fabricated design standards
- Invented API functions
- Misinterpreted BOM rules

Impact:

Safety issues, rework, failed audits.

2. Healthcare

- Wrong symptoms
- Incorrect diagnosis
- Non-existing diseases

Impact:

Serious safety risks.

3. Finance

- Wrong tax laws
- Wrong calculations
- Incorrect compliance guidance

Impact:

Legal and monetary losses.

4. Legal and Compliance

- AI invents case laws
- Adds imaginary clauses

Impact:

Risky for organizations.

How to Reduce Hallucination in AI

Reducing hallucinations is one of the biggest engineering goals today.

Here are the most effective solutions:

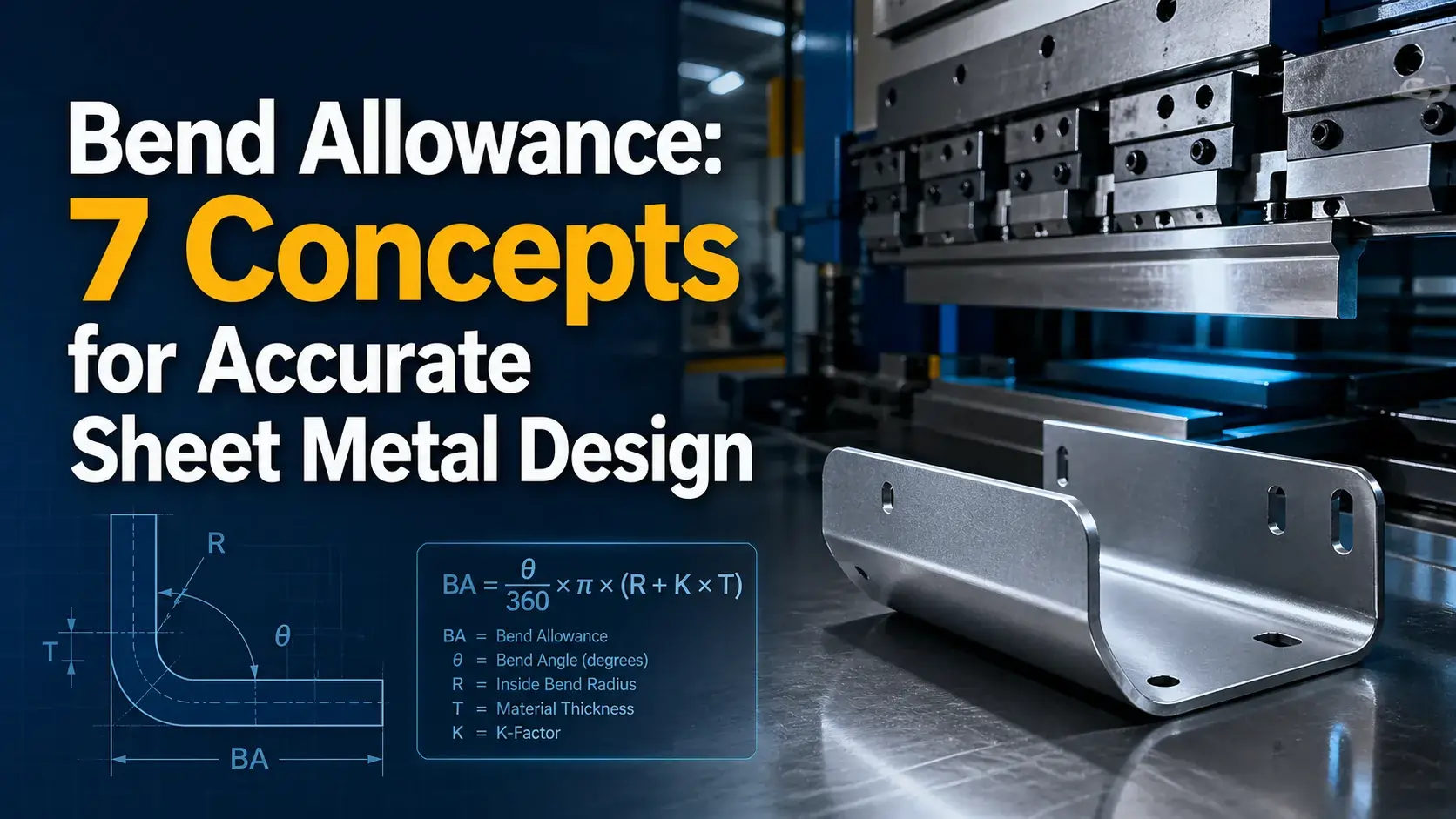

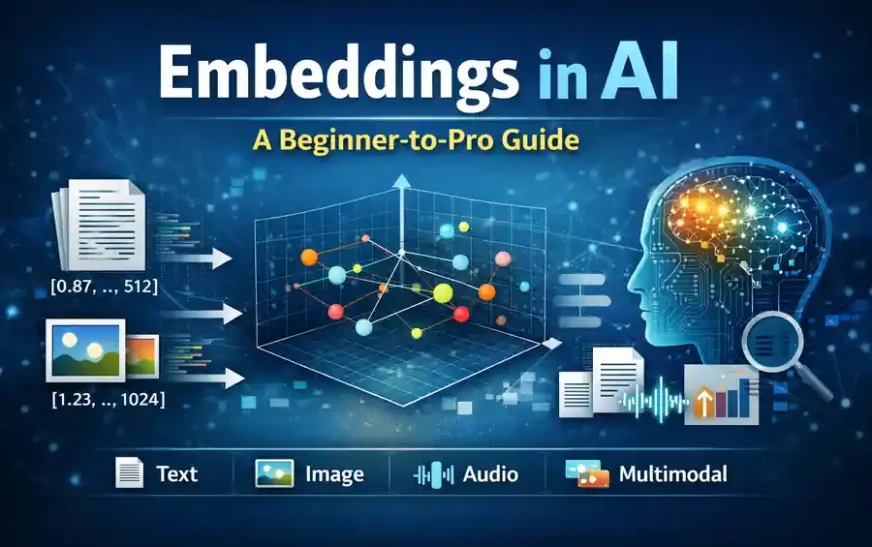

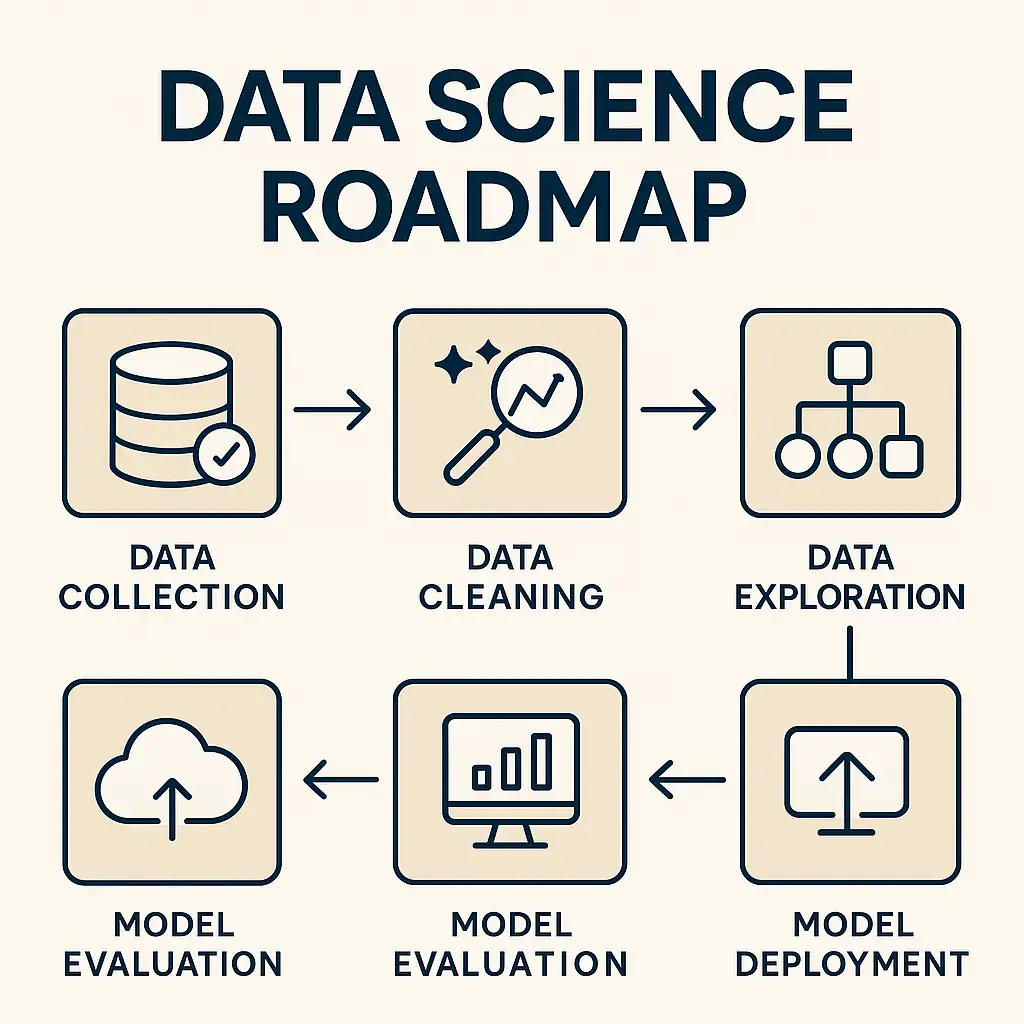

1. Use Retrieval-Augmented Generation (RAG)

RAG is one of the most effective solutions in real-world AI projects.

How it works:

- Break content into chunks
- Convert them into embeddings
- Store in a vector database
- Retrieve relevant chunks
- Provide them to the LLM

This grounds the model with real data.

RAG = Facts first, language generation second.

This single improvement can reduce hallucinations substantially; a rough estimate is 40%–70%, depending on the domain and on retrieval quality.
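The chunk, embed, store, retrieve, and provide flow can be sketched in a few lines. This is a toy version under loud assumptions: word counts stand in for real embeddings, a Python list stands in for the vector database, and the sample chunks are invented text. A production pipeline would use a learned embedding model and a real vector store.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy 'embedding': lowercase word counts. Real systems use a learned model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Chunk the content, embed each chunk, and store the pairs.
chunks = [
    "Bolted connections join steel members using high-strength bolts.",
    "Welded connections fuse steel members along a weld line.",
    "Timber joints often use dowels, nails, or mortise and tenon cuts.",
]
store = [(chunk, embed(chunk)) for chunk in chunks]

def retrieve(question, k=2):
    """Return the k stored chunks most similar to the question."""
    q = embed(question)
    ranked = sorted(store, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]

def build_prompt(question):
    """Hand the retrieved chunks to the LLM as context."""
    context = "\n".join(f"- {c}" for c in retrieve(question))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

print(build_prompt("How are steel members joined with bolts?"))
```

The returned string is what would be sent to the model. The timber chunk never reaches the prompt for a steel question, which is the grounding effect RAG relies on.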

2. Improve Prompt Quality

Good prompts reduce uncertainty.

Examples:

- Provide accurate context
- Give constraints
- Add examples
- Avoid vague instructions

3. Use Verified Sources

One of the most practical ways to reduce hallucination in AI is to ensure the model is grounded in verified, trustworthy, and up-to-date information. Language models naturally try to “fill the gaps” when they don’t have enough context. Verified sources act like a safety anchor—they provide a clear boundary for the model to stay within.

When you give the model real information, it becomes far less likely to invent details.

Why Verified Sources Reduce Hallucinations

- They give the model factual anchors instead of depending on old training data.
- They help the model avoid guessing or making assumptions when information is unclear.
- They ensure the model quotes numbers, standards, or specifications that truly exist.
- They prevent the “confident storytelling” problem where AI fills missing pieces.

Example from Engineering / CAD

If you ask AI:

“What is the maximum allowable deflection for this aluminum beam?”

Without a source, it may:

- Produce the wrong value
- Invent a formula
- Refer to a non-existent code
- Mix up units

But if you provide the source:

- IS Codes
- AISC manual
- SolidWorks API documentation
- Company design guidelines

…the model responds only within those boundaries.

How to Use Verified Sources in Practice

- Include links or snippets from authoritative websites
- Feed RAG pipelines with manuals, PDFs, SOPs, codes
- Provide a structured knowledge base (JSON, HTML, Markdown)
- Add namespace filters to avoid mixing irrelevant content
- Use “only answer from the provided source” instructions
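Two of these practices, namespace filters and source-only instructions, fit naturally into one small prompt builder. The sketch below is illustrative only: `grounded_prompt`, the record fields, and the sample snippets are all made up for this example, and the snippet text is placeholder wording rather than content from any real standard.

```python
def grounded_prompt(question, snippets, namespace):
    """Build a prompt restricted to snippets from one namespace."""
    allowed = [s for s in snippets if s["namespace"] == namespace]
    if not allowed:
        raise ValueError(f"no snippets in namespace {namespace!r}")
    sources = "\n".join(f"[{s['id']}] {s['text']}" for s in allowed)
    return (
        "Answer only from the sources below and cite the source id. "
        "If the sources do not contain the answer, reply 'Not found in sources.'\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )

snippets = [
    # Placeholder records for illustration; ids, namespaces, and text are made up.
    {"id": "DG-12", "namespace": "design-guidelines",
     "text": "Beam deflection limits are listed in section 4 of the guideline."},
    {"id": "HR-03", "namespace": "hr-policies",
     "text": "Leave requests are submitted through the HR portal."},
]

prompt = grounded_prompt(
    "What is the maximum allowable deflection for this aluminum beam?",
    snippets,
    namespace="design-guidelines",
)
print(prompt)
```

Raising an error when the namespace is empty is a deliberate choice here: sending a source-only instruction with no sources invites exactly the guessing it is meant to prevent.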

Mini Table: Good vs Poor Sources

| Source Type | Reliability | Example |
|---|---|---|
| Official standards | ★★★★★ | AISC, ISO, BIS |
| Company-approved manuals | ★★★★★ | Engineering SOPs |
| Trusted publications | ★★★★☆ | IEEE, ACM |
| Random blogs or forums | ★★☆☆☆ | Unknown engineering sites |
| Model’s assumptions | ★☆☆☆☆ | Hallucination zone |

Real Outcome

When you use verified sources, the model doesn’t need to guess. It simply pulls correct details from the factual material you provide.

As a rough estimate, this removes 50–80% of typical hallucinations in practical systems.

4. Add Evaluation Loops

Useful methods include:

- Temperature reduction
- Chain-of-thought validation
- Self-checking models
- Critic agent reviewing answers
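One simple form of self-checking is a self-consistency vote: ask the same question several times and accept the answer only if most runs agree. The sketch below assumes a hypothetical `ask` callable standing in for a real model call; `make_stub` replays canned answers so the example runs without any API.

```python
from collections import Counter

def self_consistent_answer(ask, question, samples=5, min_agreement=0.6):
    """Ask several times; return the majority answer, or None if agreement is low."""
    answers = [ask(question) for _ in range(samples)]
    answer, votes = Counter(answers).most_common(1)[0]
    return answer if votes / samples >= min_agreement else None

def make_stub(canned):
    """Hypothetical stand-in for a model call: replays canned answers in order."""
    replies = iter(canned)
    return lambda question: next(replies)

stable = make_stub(["42 mm", "42 mm", "42 mm", "40 mm", "42 mm"])
unstable = make_stub(["42 mm", "38 mm", "45 mm", "40 mm", "42 mm"])

print(self_consistent_answer(stable, "What is the hole diameter?"))    # 42 mm
print(self_consistent_answer(unstable, "What is the hole diameter?"))  # None
```

Agreement is not proof of correctness, since a model can be consistently wrong. Still, disagreement across runs is a useful signal that the answer should go to a critic or a human.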

5. Use Tool-Enabled Models

Modern LLMs can:

- Fetch real-time data
- Access APIs
- Validate against tools

The more grounded the system, the fewer hallucinations appear.
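“Validate against tools” can be as simple as recomputing a number instead of trusting the model’s arithmetic. Here is a minimal sketch of a calculator tool and a claim check. The function names are made up for this example, and the evaluator deliberately supports only `+`, `-`, `*`, and `/` on plain numbers.

```python
import ast
import operator

OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

def calculator(expression):
    """Evaluate +, -, *, / on numbers without using eval()."""
    def walk(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in OPS:
            return OPS[type(node.op)](walk(node.left), walk(node.right))
        raise ValueError("unsupported expression")
    return walk(ast.parse(expression, mode="eval").body)

def check_claim(expression, claimed, tolerance=1e-9):
    """Compare a model's claimed result with the tool's result."""
    return abs(calculator(expression) - claimed) <= tolerance

print(check_claim("1250 * 0.18", 225.0))  # True
print(check_claim("1250 * 0.18", 250.0))  # False
```

Walking the parsed expression rather than calling `eval()` keeps the tool from executing arbitrary code that a model might produce.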

Hallucination in AI vs Simple Errors

Sometimes people confuse errors with hallucinations.

Here’s the difference:

| Aspect | Hallucination | Error |
|---|---|---|
| Nature | AI fabricates confidently | Wrong due to miscalculation |
| Reason | Missing grounding | Incorrect logic |
| Common? | Very common | Less common |
| Fix | Better context/RAG | Fix logic/prompt |

AI Hallucination in RAG Systems

Even RAG systems can hallucinate if retrieval is weak.

Causes:

- Poor chunking (too long/too short)
- Wrong metadata
- Old embeddings
- Irrelevant matches
- Namespace mismatches (very common)
- Missing pages
- Duplicate page content

Fixes:

- Re-generate embeddings with correct settings
- Clean HTML extraction
- Add filters
- Boost title metadata
- Ensure deterministic chunking
- Use minimum score thresholds

A clean RAG system produces far fewer hallucinations, though it cannot guarantee zero.
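Two of these fixes, deterministic chunking and minimum score thresholds, are easy to show in code. The sketch below uses fixed-size character windows and an arbitrary default threshold of 0.75. Both values are assumptions to tune for your own data and embedding model, and the function names are made up for this example.

```python
def chunk_text(text, size=200, overlap=40):
    """Split text into fixed-size character windows; same input, same chunks."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    if not text:
        return []
    step = size - overlap
    # Stop once the remaining tail is already covered by the previous window.
    return [text[i:i + size] for i in range(0, max(len(text) - overlap, 1), step)]

def filter_matches(matches, min_score=0.75):
    """Keep only (chunk, score) pairs at or above the threshold, best first."""
    kept = [m for m in matches if m[1] >= min_score]
    return sorted(kept, key=lambda m: m[1], reverse=True)

print(chunk_text("abcdefghij", size=4, overlap=2))
# ['abcd', 'cdef', 'efgh', 'ghij']

print(filter_matches([("a", 0.9), ("b", 0.4), ("c", 0.8)]))
# [('a', 0.9), ('c', 0.8)]
```

If `filter_matches` returns an empty list, the safer behaviour is usually to tell the user that nothing relevant was found rather than to pass weak matches to the model.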

Future of Reducing Hallucination in AI

The next generation of AI systems will look very different from what we use today. Instead of relying purely on prediction, future models will be designed to stay grounded in real information — almost like an AI that “checks its work” before speaking.

Here are the breakthroughs expected to shape AI reliability in 2026 and beyond:

1. Retrieval-First Models (LLMs with Knowledge Built Into Their Core)

Today’s LLMs generate text first and consult external knowledge only if you build a RAG pipeline around them.

But future models will flip this architecture.

A retrieval-first model will:

- search before it answers
- verify context before generating text
- cross-check facts while forming sentences
- prioritize accuracy over creativity

Think of it as an LLM with a built-in search engine and vector database — not an add-on.

This will dramatically reduce hallucinations because the model never answers from memory alone.

2. Real-Time Search Models (Always Connected to Live Information)

Instead of depending on outdated training cut-offs, next-gen AI will be grounded in live internet-indexed data.

These models will:

- fetch real-time updates
- cite current facts
- avoid guessing about recent events
- correct outdated assumptions automatically

For example, if a new engineering standard, tax regulation, or software release is published, the model will know instantly — not years later.

This eliminates a large portion of hallucinations caused by old or missing data.

3. Domain-Specific LLMs (AI With Verified, Specialized Knowledge)

General-purpose AI models try to know everything — which makes them more likely to hallucinate.

The future will have specialized, expert-level LLMs built for areas like:

- medicine
- engineering
- aerospace
- legal research
- finance
- manufacturing

These models will be trained or grounded on verified industry documents, not random internet text.

So when you ask a medical model about symptoms or an engineering model about material properties, it responds strictly within validated domain knowledge.

This approach replaces “creative answers” with “certified answers.”

4. Hybrid Models (Symbolic Reasoning + Neural Transformers)

This is the most exciting direction.

Current LLMs are great at language but less reliable at strict logical reasoning.

Symbolic systems, on the other hand, are great at logic but not language.

Hybrid models combine both:

Neural part:

- understands natural language
- generates coherent answers
- handles variations, tone, and context

Symbolic part:

- checks rules and constraints
- validates logic
- ensures factual consistency
- corrects the neural output if something is off

It’s like having an AI with a creative brain plus an internal auditor.

These systems significantly reduce hallucinations because the symbolic layer refuses to allow impossible or logically inconsistent answers.
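The “internal auditor” idea can be approximated today with a plain rule check on structured model output. The sketch below is a simplified stand-in for a symbolic layer, not a real hybrid architecture. The field names and limits in `RULES` are hypothetical and not taken from any standard.

```python
RULES = {
    # Hypothetical constraints for illustration, not taken from any real standard.
    "thickness_mm": lambda v: isinstance(v, (int, float)) and 0 < v <= 50,
    "material": lambda v: v in {"steel", "aluminum"},
}

def audit(draft):
    """Return a list of rule violations found in a draft answer's fields."""
    problems = []
    for field, rule in RULES.items():
        if field not in draft:
            problems.append(f"{field}: missing")
        elif not rule(draft[field]):
            problems.append(f"{field}: value {draft[field]!r} violates rule")
    return problems

good = {"thickness_mm": 12, "material": "steel"}
bad = {"thickness_mm": -3, "material": "unobtainium"}

print(audit(good))  # []
print(audit(bad))
```

An empty list means the draft passed every rule. Anything else can be sent back to the model for correction or blocked before it reaches the user.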

Conclusion

Hallucination in AI is not a bug—it’s a natural behavior of prediction-based models. But with the right techniques, especially RAG, controlled prompting, verification layers, and domain grounding, we can significantly reduce the risk.

As AI becomes central to engineering, healthcare, finance, and everyday productivity, understanding and managing hallucinations is essential for building trustworthy systems.

If you want to explore more AI fundamentals, RAG techniques, and practical engineering applications, check out the other guides on The Tech Thinker.

Frequently Asked Questions on Hallucination in AI

1. What is a hallucination in AI?

A hallucination in AI happens when a model gives an answer that appears correct but is actually wrong, fabricated, or not grounded in real data. It occurs because the system predicts text rather than verifying facts.

2. Why do AI models hallucinate even in 2026?

Even advanced models in 2026 still hallucinate because they rely on probability, incomplete context, outdated data, and pattern prediction. Without grounding, they try to “fill the gaps.”

3. Are hallucinations the same as AI errors?

No. An AI error is simply a wrong answer.

A hallucination in AI goes further — the model invents details, references, or explanations that never existed, often with high confidence.

4. How common is hallucination in AI?

Hallucination in AI is still common across all large language models. Rough estimates range from 5% to 40% of answers, depending on the domain, when the model isn’t grounded with retrieval or verified data.

5. Why do hallucinations sound very confident?

A hallucination in AI often sounds confident because LLMs are trained to produce fluent language. The tone reflects language skill, not factual accuracy.

6. Can hallucination in AI be completely eliminated?

Not fully. But hallucination in AI can be significantly reduced using better prompts, retrieval systems (RAG), verified sources, and domain-specific training.

7. Does Retrieval-Augmented Generation (RAG) reduce hallucination in AI?

Yes. RAG is one of the best ways to reduce hallucination in AI because it forces the model to use your actual documents or database instead of guessing or fabricating answers.

8. Does hallucination in AI affect technical fields like engineering?

Absolutely. Hallucination in AI can be dangerous in engineering because the model might invent formulas, mix up materials, or misinterpret standards. Accuracy is critical in CAD, aerospace, and manufacturing.

9. Can hallucination in AI happen even when prompts are very clear?

Yes. A hallucination in AI can occur even with perfect prompts if:

- the model lacks domain expertise
- the training data is outdated
- the answer doesn’t exist in its knowledge base

10. Is hallucination in AI caused by outdated training cutoff dates?

Partly. If a model doesn’t know about new events, technology updates, or standards, it may hallucinate by inventing information to complete the answer.

11. How can users reduce hallucination in AI when prompting?

You can reduce hallucination in AI by:

- giving clear, complete context
- providing sources or references
- using step-by-step instructions
- restricting the model to your provided data

12. Why does hallucination in AI increase during coding or math tasks?

Coding and math require strict logic.

A hallucination in AI occurs when the model guesses patterns, invents functions, or approximates formulas instead of applying exact rules.

13. Do larger models produce less hallucination in AI?

Generally yes, but size alone doesn’t solve everything. Larger models reduce hallucination in AI, but grounding, retrieval, and domain-specific knowledge matter more.

14. Will future AI models (2026–2028) lower hallucination in AI further?

Yes. Technologies like retrieval-first LLMs, hybrid reasoning systems, and domain-verified AI will significantly reduce hallucination in AI over the next few years.

15. Can hallucination in AI be dangerous?

Yes. A hallucination in AI can be risky in fields like healthcare, finance, law, and engineering. Wrong facts or invented details can lead to compliance issues, safety problems, or wrong decisions.