Introduction to Embeddings in AI (Why Embeddings in AI Matter in 2026)

Embeddings power much of the AI software we use every day.

When you search something online, talk to a chatbot, categorize documents, detect duplicates, or build a recommendation engine—embeddings are silently doing the heavy lifting.

In 2026, with models from providers such as OpenAI, Google, and Cohere, and with multimodal systems dominating the AI landscape, embeddings have evolved from a technical concept into a core layer of intelligence.

This guide gives you a complete, practical, beginner-friendly understanding of embeddings—without math overload—so you can actually use them in your applications, chatbots, RAG systems, engineering workflows, and search pipelines.

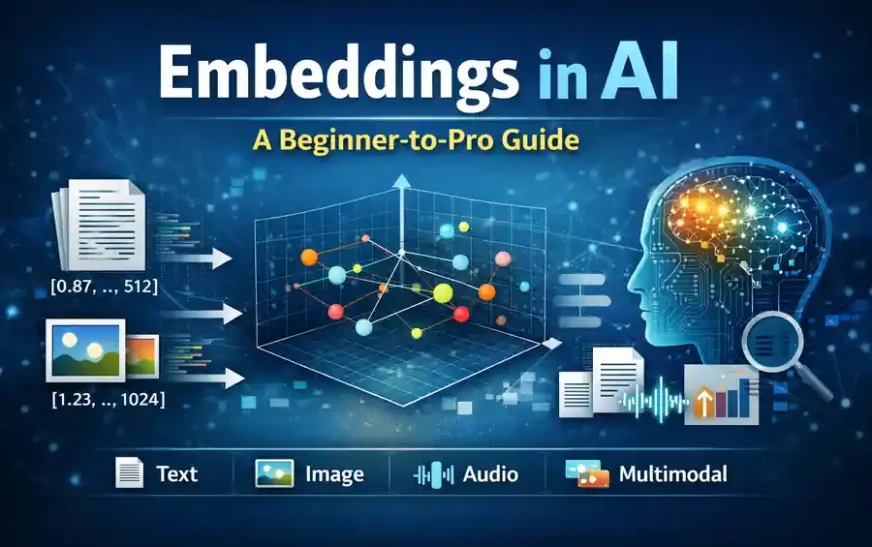

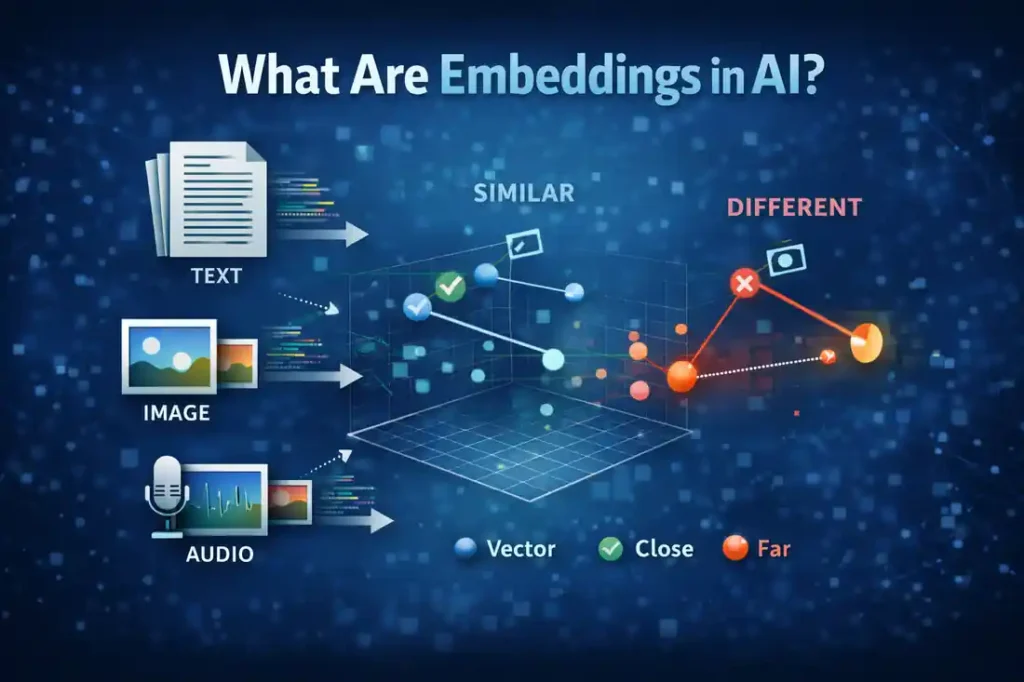

What Are Embeddings in AI?

Embeddings in AI are mathematical representations of meaning.

They convert words, images, documents, or audio into vectors (lists of numbers) that machines can understand.

A simple way to understand:

If two things are “similar,” their embeddings will be:

✔ Close to each other in vector space

✔ Not identical, but meaningfully related

Example:

“car” and “vehicle” → close vectors

“car” and “banana” → far apart

Quick Table

| Term | Simple Meaning |
|---|---|
| Embedding | Numeric representation of what the data means |
| Vector | List of numbers |
| Dimension | Length of the vector (e.g., 768) |
| Similarity | How close two meanings are |

Embeddings are NOT about memorizing words—they capture semantic meaning.

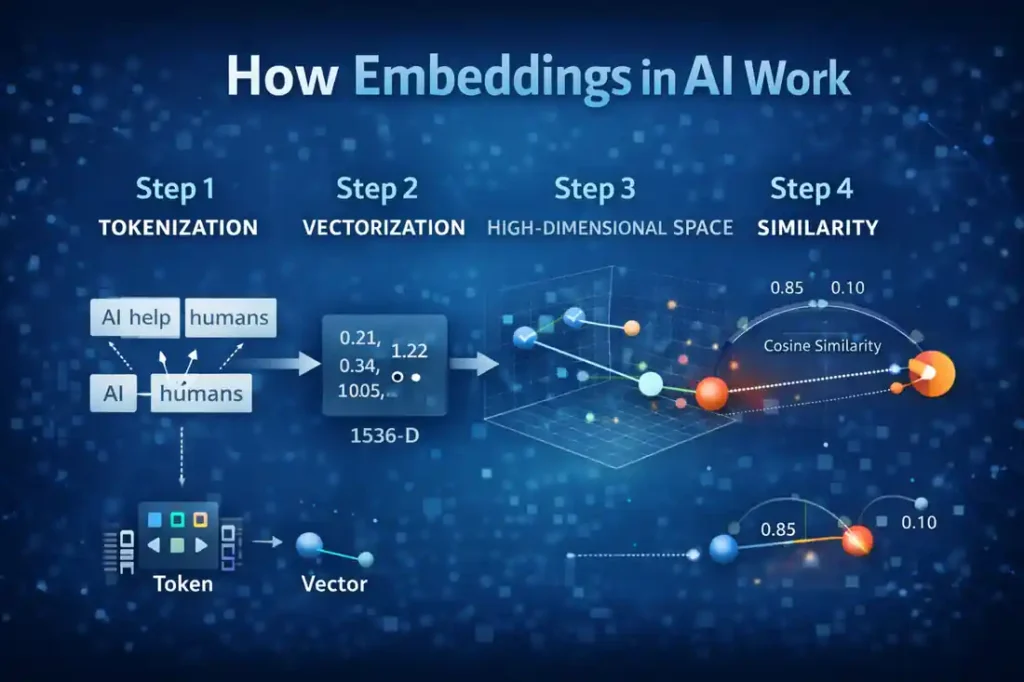

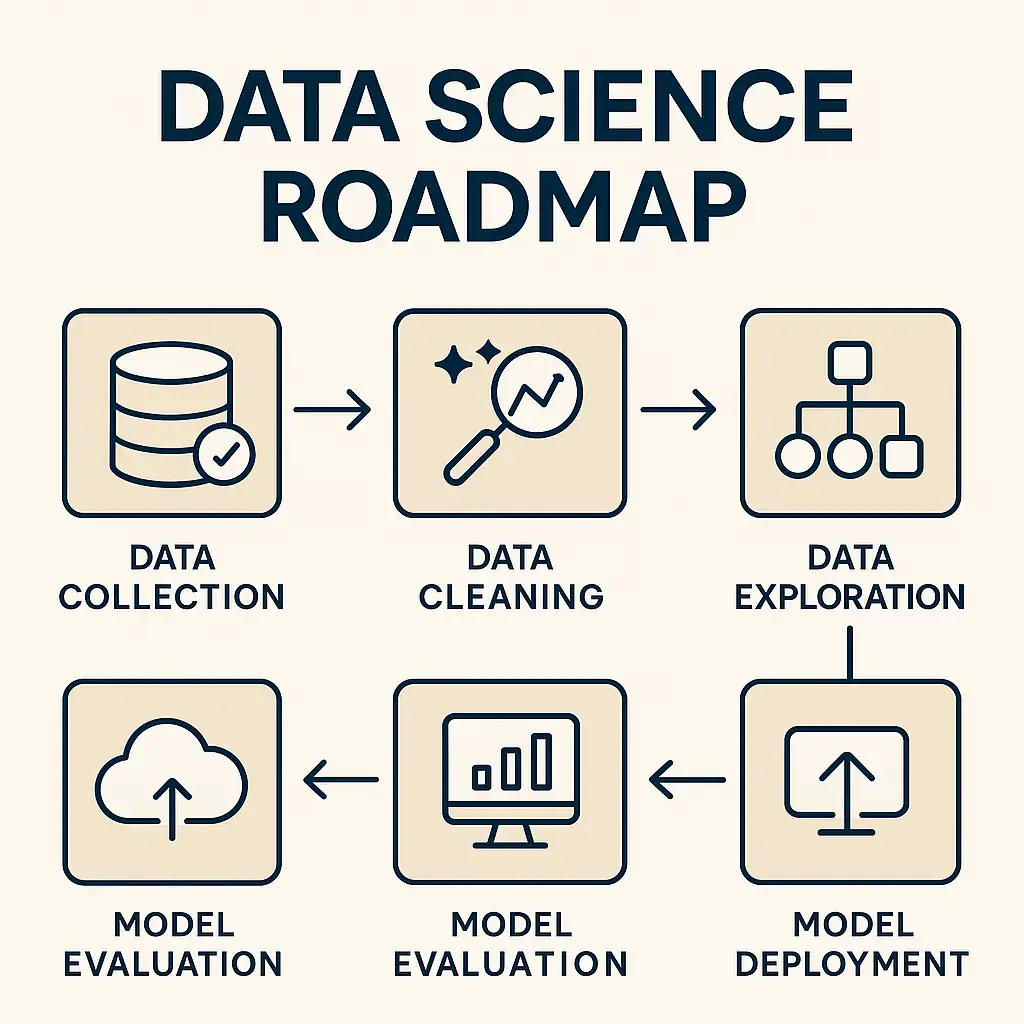

How Embeddings in AI Work (Tokenization to Meaning)

Embeddings follow a simple pipeline:

Step 1 — Tokenization

The text is broken into smaller pieces (tokens).

Example:

“AI helps humans” → [“AI”, “helps”, “humans”]
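To make the tokenization step concrete, here is a minimal sketch. It assumes a toy word-and-punctuation splitter; production embedding models typically use subword tokenizers, so the function names and the vocabulary scheme below are illustrative only.

```python
import re


def simple_tokenize(text):
    """Split text into word and punctuation tokens (a toy stand-in for a real tokenizer)."""
    return re.findall(r"\w+|[^\w\s]", text)


def build_vocab(tokens):
    """Assign each distinct token an integer ID in order of first appearance."""
    vocab = {}
    for token in tokens:
        if token not in vocab:
            vocab[token] = len(vocab)
    return vocab


tokens = simple_tokenize("AI helps humans")
vocab = build_vocab(tokens)
token_ids = [vocab[t] for t in tokens]

print(tokens)     # ['AI', 'helps', 'humans']
print(token_ids)  # [0, 1, 2]
```

The integer IDs are what a model actually consumes; the embedding step then maps them to vectors.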

Step 2 — Vectorization

A model converts each token or sentence into a vector like:

[0.21, -0.34, 1.12, 0.05, ... 1536 dimensions]

These numbers encode meaning.

Step 3 — High-Dimensional Space

You can imagine a huge 768-dimensional or 1536-dimensional map.

Words with similar meaning stay close on this map.

Step 4 — Similarity Score

The system computes how “close” two vectors are using cosine similarity.

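Similarity 0.85 → very similar

Similarity 0.10 → unrelated

The exact numbers depend on the model, so treat these as illustrative rather than fixed thresholds.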

This is the magic behind AI search and chatbots.
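The similarity step can be sketched in a few lines of plain Python. The 4-dimensional vectors below are invented for illustration; real embeddings come from a model and have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Hypothetical vectors, made up so that "car" and "vehicle" point the same way.
car = [0.9, 0.8, 0.1, 0.0]
vehicle = [0.8, 0.9, 0.2, 0.1]
banana = [0.0, 0.1, 0.9, 0.8]

print(cosine_similarity(car, vehicle))  # close to 1: similar direction
print(cosine_similarity(car, banana))   # close to 0: nearly unrelated
```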

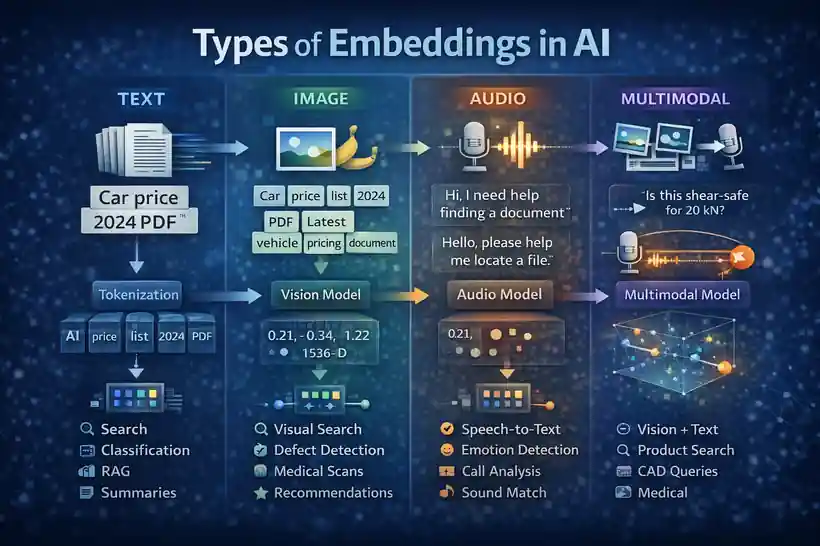

Types of Embeddings in AI (Text, Image, Audio, Multimodal)

Embeddings in AI come in different forms depending on the type of data you want the model to understand. Even though the formats vary—text, images, audio, videos—the foundational idea remains the same:

👉 Convert raw data into numerical meaning.

👉 Place similar items closer in vector space.

Below is a practical, 2026-level explanation of each embedding type with real-world examples.

Text Embeddings in AI (The Most Common Type)

Text embeddings convert words, sentences, paragraphs, or full documents into dense vectors that capture their semantic meaning.

This is the backbone of:

- Semantic search
- Document classification
- Chatbot memory
- Duplicate detection
- Topic clustering

Why Text Embeddings Matter

Unlike keyword search, which matches exact words, embeddings capture context and intent.

Example:

- “Car price list 2024 PDF”
- “Latest vehicle pricing document”

These phrases use different words but have the same meaning — embeddings easily detect this.
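A quick way to see why keyword matching struggles here is to measure the word overlap between the two phrases. This sketch uses Jaccard overlap on lowercase words as a stand-in for keyword search, which is a simplification of how real keyword engines score.

```python
def keyword_overlap(query_a, query_b):
    """Jaccard overlap between the lowercase word sets of two phrases."""
    words_a = set(query_a.lower().split())
    words_b = set(query_b.lower().split())
    union = words_a | words_b
    if not union:
        return 0.0
    return len(words_a & words_b) / len(union)


overlap = keyword_overlap(
    "Car price list 2024 PDF",
    "Latest vehicle pricing document",
)
print(overlap)  # 0.0: the phrases share no exact words
```

With zero shared words, an exact-match search has nothing to work with, which is the gap embeddings are meant to close.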

How They Work

Text → tokens → embedding model → vector representation

Example vector: [-0.021, 0.845, -0.214, …] (1536 dimensions)

Practical Use Cases

- Knowledge base search
- Legal document similarity
- Engineering rule retrieval
- Blog recommendation
- FAQ matching
- Email classification

Use Case Comparison

| Use Case | Why Embeddings Help |
|---|---|
| Search | Finds results by meaning, not exact words |
| Classification | Groups similar queries/documents |
| RAG | Retrieves relevant context chunks |
| Summaries | Helps identify topic structure |

Image Embeddings in AI (Understanding Visual Meaning)

Image embeddings capture the visual meaning of an image, not just its pixels.

They understand:

- Shapes
- Objects
- Texture
- Edges
- Style
- Background context

How Image Embeddings Work

An image is passed through a vision model that extracts features → converts them into a high-dimensional vector.

Features can include:

- Curvature
- Contrasts
- Corners
- Color patterns
- Object boundaries

Practical Use Cases

- Visual search (“find similar images”)
- Defect detection in manufacturing
- Medical scan comparison
- Product catalog matching
- Style-based recommendations
- Quality inspection in engineering

Example

If you upload a steel connection image, embeddings can find visually similar connections—even with different sizes, angles, or lighting.

Audio Embeddings in AI (Capturing Sound Meaning)

Audio embeddings represent speech or sound patterns in vector form.

They capture things like:

- Tone
- Speed
- Emotion
- Speaker identity
- Accent
- Rhythm

What They Enable

- Speech-to-text accuracy
- Emotion detection
- Voice-based recommendations
- Sound classification
- Customer call analysis
- Duplicate clip detection

Practical Example

You say: “Hi, I need help finding a document.”

Someone else says: “Hello, please help me locate a file.”

Different wording + different voice → same intent.

Audio embeddings trained on spoken content can place these two clips close together, even though the acoustic signals differ.

Multimodal Embeddings in AI (The Future of AI Understanding)

Multimodal embeddings combine text + image + audio into a single semantic space.

This means the model can understand:

- A text query
- A picture
- An audio note
- A mixture of all three

All of these are mapped into one unified representation of meaning.

Why Multimodal Embeddings Are Important in 2026

Models from providers such as OpenAI and Google use multimodal embeddings to handle complex inputs such as:

- “What is this component?” + image
- “Fix this error” + screenshot
- “Compare this drawing with last revision”
- “Explain this chart”

Practical Use Cases

- Vision + text chatbots
- Product search (“show similar items”)
- CAD model understanding + text queries
- Engineering drawing comparison
- Social media content moderation
- Medical image + doctor notes analysis

Example

You upload a picture of a bolt connection and ask:

“Is this shear-safe for 20 kN?”

A multimodal model uses embeddings from both text + image to respond.

Comparison of Types of Embeddings in AI

| Type | Input | Learns Meaning From | Best For |
|---|---|---|---|
| Text Embeddings | Words, sentences | Context & intent | Search, RAG, Q&A |
| Image Embeddings | Photos, drawings | Visual features | Vision search, QC |
| Audio Embeddings | Speech, sound | Tone, patterns | Speech AI, call bots |
| Multimodal Embeddings | Text + Images + Audio | Combined signals | Complex chatbots, engineering workflows |

Why Embeddings in AI Are So Important in 2026

Embeddings are the backbone of:

- Semantic search
- Chatbots
- RAG (Retrieval-Augmented Generation)
- Document similarity
- Fraud detection
- Code understanding
- Recommendation engines
- Duplicate content detection
- Multilingual understanding

Embeddings have largely displaced purely keyword-based systems for these tasks.

Now AI understands meaning, not just words.

Embeddings in RAG (Retrieval-Augmented Generation)

Embeddings are the heart of RAG.

If embeddings are bad, RAG results are bad.

Chunking Strategy

- Split content into small, meaningful chunks
- Generate embeddings for each chunk

Good chunking = high-quality retrieval.

Vector Databases

RAG uses vector databases like:

- Pinecone
- Chroma
- Weaviate

These store embeddings and perform fast similarity search.
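To show what "store embeddings and run similarity search" means at its simplest, here is a brute-force in-memory sketch. It is not how Pinecone, Chroma, or Weaviate are implemented (production systems generally rely on approximate nearest-neighbor indexes); the class name, document IDs, and 3-dimensional vectors are all hypothetical.

```python
import math


class InMemoryVectorStore:
    """Toy vector store: keeps unit-length vectors and scans all of them per query."""

    def __init__(self):
        self._items = []  # list of (doc_id, unit_vector) pairs

    @staticmethod
    def _normalize(vector):
        norm = math.sqrt(sum(x * x for x in vector))
        if norm == 0.0:
            raise ValueError("cannot store or query a zero vector")
        return [x / norm for x in vector]

    def add(self, doc_id, vector):
        self._items.append((doc_id, self._normalize(vector)))

    def search(self, query_vector, top_k=3):
        """Return up to top_k (doc_id, cosine_score) pairs, best match first."""
        query = self._normalize(query_vector)
        scored = [
            (sum(q * v for q, v in zip(query, vector)), doc_id)
            for doc_id, vector in self._items
        ]
        scored.sort(reverse=True)
        return [(doc_id, score) for score, doc_id in scored[:top_k]]


store = InMemoryVectorStore()
store.add("pricing-doc", [0.9, 0.1, 0.0])
store.add("welding-guide", [0.1, 0.9, 0.2])
store.add("hr-policy", [0.0, 0.2, 0.9])

results = store.search([0.8, 0.2, 0.1], top_k=2)
print(results)  # "pricing-doc" ranks first, "welding-guide" second
```

A linear scan like this is fine for a few thousand vectors; beyond that, an indexed store is the usual choice.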

How Embeddings Reduce Hallucination

Better embeddings → better context → fewer hallucinated answers.
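The "better context" part of that chain usually means pasting the retrieved chunks into the prompt. A minimal sketch, assuming the chunks have already been retrieved; the function name and the prompt wording are illustrative, not a standard.

```python
def build_grounded_prompt(question, retrieved_chunks):
    """Assemble a prompt that asks the model to answer only from the given chunks."""
    context = "\n\n".join(
        f"[{index}] {chunk}"
        for index, chunk in enumerate(retrieved_chunks, start=1)
    )
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


prompt = build_grounded_prompt(
    "What torque applies to M20 bolts?",
    ["Hypothetical chunk about bolt torque.", "Hypothetical chunk about flanges."],
)
print(prompt)
```

If retrieval returns the wrong chunks, the model is grounded in the wrong text, which is why embedding quality matters so much here.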

Cosine Similarity in Embeddings in AI

Cosine similarity is the cosine of the angle between two vectors.

It compares direction, not distance.

Why?

Because the direction of an embedding carries its meaning, while its length does not affect the score.
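For readers who want the formula, the standard definition for two vectors $a$ and $b$ with $n$ dimensions is:

$$
\cos\theta \;=\; \frac{a \cdot b}{\lVert a \rVert \, \lVert b \rVert}
\;=\; \frac{\sum_{i=1}^{n} a_i b_i}{\sqrt{\sum_{i=1}^{n} a_i^2}\;\sqrt{\sum_{i=1}^{n} b_i^2}}
$$

Scaling either vector by a positive constant leaves the result unchanged, which is why the score ignores vector length.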

Simple Example

- “France capital”
- “Paris city info”

Similarity: 0.91 → high meaning overlap (an illustrative score; actual values vary by model)

When Cosine Fails

- Very long passages, where many topics blend into one vector
- Contradicting statements on the same topic, which can still score as similar
- Low-quality embeddings

How to Choose the Right Embedding Model in 2026

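Different models come with different dimensions, costs, and accuracy. The dimensions below are typical defaults and vary by model variant, so check the provider's documentation before sizing an index.

| Model | Dim | Strength | Use Case |
|---|---|---|---|
| OpenAI text-embedding-3 | 1536 (small), 3072 (large) | strong general-purpose | RAG, search |
| Cohere embeddings | 1024 | fast | semantic search |
| Google embeddings | 768 | multimodal | vision + text |
| Local: BGE (base) | 768 | offline | privacy projects |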

Key metrics to consider:

- Cohesion
- Separation
- Noise resistance
- Multilingual support
- Cost per 1000 tokens

How Companies Use Embeddings in AI (Real World Use Cases)

Search Engines

- Semantic indexing
- Query expansion
- Better ranking

E-Commerce

- Product similarity
- Personalized recommendations

Customer Support Bots

- Retrieve similar tickets
- Suggest solutions

Engineering Workflows

- Technical document search
- CAD rule extraction
- Drawing comparison
- QA automation


Visualizing Embeddings in AI (t-SNE, UMAP)

Embeddings are high-dimensional.

To visualize, we use t-SNE or UMAP to reduce dimensions to 2D.

- t-SNE → preserves local structure
- UMAP → preserves global + local structure

Useful for:

- Clustering
- Outlier detection
- Model debugging

Common Mistakes When Using Embeddings in AI

Even though embeddings in AI are powerful, many systems fail to produce accurate results because of a few common but critical mistakes. These issues silently affect RAG accuracy, search quality, and overall user experience.

Below are the mistakes you should avoid — each explained with practical examples.

1. Chunk Size Too Large (or Too Small)

Chunking is one of the most overlooked steps in building an embedding pipeline.

If your chunk size is not optimal, your model cannot extract meaning correctly.

Why this is a mistake

- Large chunk = diluted meaning
- Small chunk = missing context
- Both reduce retrieval accuracy

Example

A 2,000-word technical document chunked into 1 huge block →

Embedding cannot focus on important points → RAG retrieves irrelevant content.

A paragraph split into tiny 10-word chunks →

Model loses structure → answers become inconsistent.

✔️ Best Practice

- 200–350 tokens per chunk as a starting point
- 10–15% overlap
- Keep each chunk semantically self-contained
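The size and overlap guidance above can be sketched as a sliding window. This version works on any list of tokens; the defaults (300 tokens, 40 overlapping, roughly 13%) are one point inside the suggested ranges, and splitting on whitespace is an assumption that only approximates a model's real token count.

```python
def chunk_tokens(tokens, chunk_size=300, overlap=40):
    """Split a token list into fixed-size windows that share `overlap` tokens."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be at least 0 and smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # the last window already reaches the end of the text
    return chunks


# Stand-in for `document_text.split()` on a 1,000-word document.
tokens = [f"w{i}" for i in range(1000)]
chunks = chunk_tokens(tokens)

print(len(chunks))                        # 4
print([len(chunk) for chunk in chunks])   # [300, 300, 300, 220]
```

A fixed window ignores sentence and section boundaries, so in practice it is often combined with splitting on headings or paragraphs to keep each chunk self-contained.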

2. Choosing the Wrong Embedding Model

Not all embedding models perform equally.

Mistake

Using a general-purpose embedding model for domain-specific data such as:

- Engineering drawings
- Legal documents
- Medical reports
- CAD rules
- Financial statements

On data like this, general models can show poor recall and meaning mismatch.

✔️ Best Practice

Choose the model based on:

- Domain
- Cost
- Dimension
- Language
- Latency
- GPU/CPU availability

For example:

A model optimized for short consumer text is not ideal for engineering manuals.

3. No Normalization Before Storing Embeddings

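Many developers skip normalization, assuming the embedding model already handles it. Some models do return unit-length vectors, but not all, so it is worth checking.

Why It Matters

Unnormalized vectors have varying magnitudes.

Cosine similarity ignores magnitude, but dot-product and Euclidean scoring do not, so unnormalized vectors can distort similarity scores and ranking.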

✔️ Best Practice

Normalize embeddings using:

- L2 normalization (scale each vector to unit length)
- A consistent norm across every vector in the index

This ensures:

- Stable similarity scoring
- Better clustering
- More consistent retrieval

Some vector databases handle normalization internally when an index is configured for cosine similarity, so check the documentation for the one you use. Local implementations often forget this step.
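Here is a small sketch of L2 normalization and why it matters for dot-product scoring. The vectors are made up: two point in the same direction but differ tenfold in length.

```python
import math


def l2_normalize(vector):
    """Scale a vector to unit length (L2 norm of 1)."""
    norm = math.sqrt(sum(x * x for x in vector))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vector]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


query = [1.0, 0.0]
short_vec = [3.0, 4.0]
long_vec = [30.0, 40.0]  # same direction as short_vec, ten times the magnitude

# Raw dot products reward the longer vector even though the direction is identical.
raw_short = dot(query, short_vec)
raw_long = dot(query, long_vec)
print(raw_short, raw_long)  # 3.0 30.0

# After normalization both score the same, because only direction is left.
unit_short = dot(l2_normalize(query), l2_normalize(short_vec))
unit_long = dot(l2_normalize(query), l2_normalize(long_vec))
print(unit_short, unit_long)
```

Once every stored vector and every query is unit length, the dot product equals cosine similarity, so the two metrics rank results identically.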

4. Using Keyword Search as a Fallback (Without Hybrid Logic)

Some pipelines use keyword fallback when embeddings fail.

But keyword search ≠ semantic understanding.

Mistake

“Keyword fallback” leads to irrelevant matches when:

- Users enter spelling mistakes
- Synonyms are used
- Technical words vary

Example:

“welding defect guide” and “fusion flaw procedure” mean nearly the same thing, but keyword search treats them as unrelated because they share no words.

✔️ Best Practice

If fallback needed → use hybrid search

- BM25 + embeddings
- Weighted scoring
- Strict thresholding

Hybrid search often improves precision while keeping the recall that embeddings provide.
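Weighted scoring with a threshold can be sketched as below. It assumes both scores have already been scaled to the 0 to 1 range (raw BM25 scores are not, so they would need rescaling first). The `alpha` weight, the `min_score` cutoff, the document IDs, and the scores are all hypothetical values chosen for illustration.

```python
def hybrid_score(keyword_score, embedding_score, alpha=0.5):
    """Blend two scores in [0, 1]; alpha is the weight given to the embedding score."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be between 0 and 1")
    return alpha * embedding_score + (1.0 - alpha) * keyword_score


def rank_hybrid(candidates, alpha=0.5, min_score=0.3):
    """Rank (doc_id, keyword_score, embedding_score) tuples, dropping weak matches."""
    scored = [
        (hybrid_score(keyword, embedding, alpha), doc_id)
        for doc_id, keyword, embedding in candidates
    ]
    kept = [(score, doc_id) for score, doc_id in scored if score >= min_score]
    kept.sort(reverse=True)
    return [(doc_id, score) for score, doc_id in kept]


# Made-up scores for the query "welding defect guide".
candidates = [
    ("fusion-flaw-procedure", 0.0, 0.90),  # no shared words, close in meaning
    ("welding-defect-guide", 1.0, 0.95),   # exact wording and close in meaning
    ("hr-policy", 0.1, 0.05),              # weak on both signals
]

ranked = rank_hybrid(candidates, alpha=0.7, min_score=0.3)
print(ranked)  # welding-defect-guide first, fusion-flaw-procedure second
```

The synonym document survives on its embedding score alone, while the threshold removes the match that is weak on both signals.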

5. Using the Wrong Distance Metric

Many developers don’t realize that choosing the wrong distance metric completely changes retrieval accuracy.

❌ Common Wrong Choices

- Euclidean distance on unnormalized vectors (sensitive to vector magnitude)
- Manhattan distance (also magnitude-sensitive, and rarely what embedding models are trained for)

✔️ Best Choices for Embeddings in AI

- Cosine similarity (best overall)
- Dot-product similarity (for normalized vectors)

Example

Two embeddings that point in the same direction but differ in magnitude have a cosine similarity of 1, yet their Euclidean distance can be large, which misleads ranking.
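The difference between the metrics is easy to check numerically. The vectors below are invented; the last part verifies the standard identity that, for unit-length vectors, squared Euclidean distance equals 2 − 2·cosine, which means both metrics give the same ranking once vectors are normalized.

```python
import math


def norm(vector):
    return math.sqrt(sum(x * x for x in vector))


def cosine_similarity(a, b):
    return sum(x * y for x, y in zip(a, b)) / (norm(a) * norm(b))


def euclidean_distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def l2_normalize(vector):
    length = norm(vector)
    return [x / length for x in vector]


a = [1.0, 2.0]
b = [10.0, 20.0]  # same direction as a, ten times longer

print(cosine_similarity(a, b))   # approximately 1.0
print(euclidean_distance(a, b))  # about 20.1, despite identical direction

# After normalization the two vectors coincide.
print(euclidean_distance(l2_normalize(a), l2_normalize(b)))  # approximately 0.0

# Identity check on two unit vectors pointing in different directions.
u = l2_normalize([1.0, 2.0])
v = l2_normalize([2.0, 1.0])
squared_distance = euclidean_distance(u, v) ** 2
print(squared_distance, 2 - 2 * cosine_similarity(u, v))  # both approximately 0.4
```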

6. Ignoring Domain-Specific Stopwords

General NLP stopwords do not apply to domain-specific content.

❌ Example

In engineering documents, words like:

- flange
- torque
- assembly
- weld
- revision

These are NOT stopwords, but frequency-based preprocessing filters can treat such common words as low importance and strip them.

⚠️ Result

Embeddings may lose critical signals → RAG missing key sentences → Hallucination increases.

✔️ Best Practice

Create a domain-friendly stopword list:

- Keep important technical terms
- Ignore non-informative words
- Retain numbers, units, IDs (e.g., M20, 24 mm, Rev B)

This dramatically improves embedding accuracy in engineering-heavy workflows.
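A domain-friendly filter along those lines might look like the sketch below. It only applies to pipelines that strip stopwords before indexing or embedding; many embedding models take raw text and need no such step. Both word sets are hypothetical: `FREQUENCY_STOPWORDS` stands in for the output of a frequency-based filter that has wrongly flagged "assembly" and "revision".

```python
import re

# Hypothetical output of a frequency-based stopword filter on an engineering corpus.
FREQUENCY_STOPWORDS = {"the", "to", "of", "per", "assembly", "revision"}

# Terms the domain expert wants kept no matter how frequent they are.
DOMAIN_TERMS = {"flange", "torque", "assembly", "weld", "revision"}

HAS_DIGIT = re.compile(r"\d")


def filter_tokens(tokens):
    """Drop stopwords, but always keep domain terms and anything containing a digit."""
    kept = []
    for token in tokens:
        lowered = token.lower()
        if lowered in DOMAIN_TERMS or HAS_DIGIT.search(token):
            kept.append(token)  # protected: technical term, number, or ID such as M20
        elif lowered not in FREQUENCY_STOPWORDS:
            kept.append(token)
    return kept


sentence = "Tighten the M20 flange bolt to 24 mm per Rev B of the assembly"
filtered = filter_tokens(sentence.split())
print(filtered)
# ['Tighten', 'M20', 'flange', 'bolt', '24', 'mm', 'Rev', 'B', 'assembly']
```

Checking the protected list first is the design choice that matters: it lets the domain list override whatever the generic filter decided.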

Mistakes & Fixes in AI Embeddings

| Mistake | Why It Hurts | Fix |
|---|---|---|
| Chunk too large or too small | Dilutes meaning or loses context | 200–350 tokens + overlap |
| Wrong model | Poor accuracy | Pick model based on domain |
| No normalization | Unstable similarity scoring | L2 normalize vectors |
| Keyword fallback | No semantic relevance | Hybrid search |
| Wrong metric | Wrong ranking | Cosine or dot-product |
| Ignoring domain-specific stopwords | Lost domain meaning | Custom stopword list |

Conclusion — Mastering Embeddings in AI in 2026

Embeddings in AI are now the core layer of modern intelligence.

If you understand embeddings, you understand:

- How LLMs retrieve information
- How RAG reduces hallucinations
- How AI systems deliver accurate results
- How semantic search works
- How modern chatbots perform

This guide sets the foundation for your next steps:

→ Chunking

→ Vector search

→ RAG pipelines

→ Production deployment


FAQ on Embeddings in AI

1. What are Embeddings in AI?

Embeddings in AI are numerical representations that help machines understand meaning, similarity, and intent in text, images, audio, and multimodal data. Instead of matching exact words or pixels, embeddings capture semantic relationships and convert them into high-dimensional vectors.

Key points:

- Represent meaning, not keywords
- Used in search, RAG, clustering
- Work across text, vision, and audio
- Enable semantic ranking of information
- Foundation of modern AI systems

2. How do Embeddings in AI work?

Embeddings work by converting raw input into tokens, passing them through a neural model, and generating a meaningful vector. This vector places similar content close together in vector space, allowing AI to understand context beyond surface-level words.

Key points:

- Tokenization → vectorization pipeline
- High-dimensional meaning space
- Cosine similarity for comparison
- Semantic grouping of content
- Works across different data types

3. Why are Embeddings in AI important in 2026?

In 2026, AI systems depend on embeddings more than ever because traditional keyword systems fail to capture real intent. Embeddings enable smarter search, more accurate chatbots, and better contextual understanding in enterprise applications.

Key points:

- Reduce hallucinations in LLMs
- Improve accuracy of RAG systems
- Enable multilingual understanding
- Support multimodal AI
- Power vector databases and semantic search

4. What are Text Embeddings in AI?

Text embeddings represent words, phrases, or documents as vectors based on meaning. They help systems understand the relationships between ideas, even when different words are used.

Key points:

- Best for search and document retrieval
- Capture synonyms and semantic patterns
- Useful for clustering and tagging
- Improve FAQ matching accuracy
- Essential for knowledge-based chatbots

5. What are Image Embeddings in AI?

Image embeddings extract visual features—shapes, edges, textures, and objects—to understand what appears in an image. They convert the picture into a vector that reflects its visual meaning.

Key points:

- Used in visual search systems
- Help detect similarities in product catalogs
- Support quality inspection workflows
- Enable screenshot-based queries
- Power multimodal chatbots and apps

6. What are Audio Embeddings in AI?

Audio embeddings represent spoken language, tone, rhythm, and speaker characteristics as vectors. They enable AI to analyze emotion, intent, and meaning in audio recordings.

Key points:

- Used in speech-to-text enhancement
- Detect speaker identity
- Understand sentiment and tone
- Improve call-center analytics
- Power voice assistants

7. What are Multimodal Embeddings in AI?

Multimodal embeddings combine text, images, and audio into one unified vector space. This allows AI models to answer questions about images, analyze screenshots, and correlate visuals with written or spoken input.

Key points:

- Support text+image search
- Enable visual QA bots
- Essential for advanced assistants
- Used in product recommendations
- Backbone of modern vision-language models

8. How does cosine similarity help Embeddings in AI?

Cosine similarity measures the angle between two embedding vectors, indicating how similar their meaning is. It helps rank results by relevance, making search and RAG outputs more accurate.

Key points:

- Score ranges from -1 to 1
- Higher score = more similar
- Works well for high-dimensional vectors
- Not affected by vector length
- Widely used similarity metric for embeddings

9. What is the ideal chunk size for Embeddings in AI?

Chunk size determines how much text is encoded at once. If chunks are too large, meaning becomes diluted; if too small, context gets lost. A balanced chunk ensures accurate retrieval.

Key points:

- 200–350 tokens recommended
- 10–15% overlap maintains flow
- Smaller chunks lead to fragmentation
- Larger chunks reduce precision
- Essential for high-quality RAG

10. Which embedding model is best for 2026?

The best embedding model depends on your workload—general text, technical data, images, or multimodal tasks. Newer models offer higher quality and better semantic consistency.

Key points:

- Higher dimensions can improve precision, but cost more to store and search
- Local models for privacy needs
- Multimodal models for image+text
- Domain-specific models for technical fields
- Evaluate cost, latency, and accuracy

11. How are Embeddings in AI used in RAG?

In RAG systems, embeddings convert documents into vectors stored inside a vector database. When a question is asked, the system fetches the closest vectors to supply grounded context.

Key points:

- Enables factual, context-aware answers
- Reduces hallucination
- Improves retrieval precision
- Finds relevant passages even when the wording differs
- Works well with long documents once they are chunked

12. How do Embeddings in AI differ from keyword search?

Keyword search matches exact words, while embeddings match meaning. Even if the user uses different wording, embeddings ensure the system retrieves relevant content.

Key points:

- Understand synonyms
- Tolerate many spelling errors
- Work across languages when the model is multilingual
- Capture context & intent
- Better for long-form content

13. Why do embeddings sometimes fail?

Embeddings fail when chunking is wrong, low-quality models are used, or domain-specific signals are ignored. This leads to irrelevant matches or missing context.

Key points:

- Wrong distance metric
- Incorrect chunk size
- Missing normalization
- Poor domain adaptation
- Outdated embedding models

14. Can Embeddings in AI be stored locally without cloud services?

Yes, you can run embeddings fully offline by pairing a local embedding model with a local vector store such as FAISS, Chroma, or a self-hosted Weaviate instance. This is common in engineering, healthcare, and confidential enterprise environments.

Key points:

- Zero cloud dependency
- High privacy
- No per-token cost
- Fast local retrieval
- Suited for sensitive data domains

15. How do Embeddings in AI reduce hallucinations in LLMs?

When an LLM is grounded with accurate, relevant chunks retrieved via embeddings, hallucinated responses drop substantially. The model answers based on real documents, not assumptions.

Key points:

- Improves grounding & context accuracy
- Allows fact-based responses
- Reduces confidence-based errors
- Strengthens evidence support
- Boosts search reliability